The Basic Arc of the Story

As I’ve explained elsewhere, I think I now finally understand the Second Law of thermodynamics. But it’s a new understanding, and to get to it I’ve had to overcome a certain amount of conventional wisdom about the Second Law that I at least have long taken for granted. And to check myself I’ve been keen to know just where this conventional wisdom came from, how it’s been validated, and what might have made it go astray.

And from this I’ve been led into a rather detailed examination of the origins and history of thermodynamics. All in all, it’s a fascinating story, that both explains what’s been believed about thermodynamics, and provides some powerful examples of the complicated dynamics of the development and acceptance of ideas.

The basic concept of the Second Law was first formulated in the 1850s, and rather rapidly took on something close to its modern form. It began partly as an empirical law, and partly as something abstractly constructed on the basis of the idea of molecules, that nobody at the time knew for sure existed. But by the end of the 1800s, with the existence of molecules increasingly firmly established, the Second Law began to often be treated as an almost-mathematically-proven necessary law of physics. There were still mathematical loose ends, as well as issues such as its application to living systems and to systems involving gravity. But the almost-universal conventional wisdom became that the Second Law must always hold, and if it didn’t seem to in a particular case, then that must just be because there was something one didn’t yet understand about that case.

There was also a sense that regardless of its foundations, the Second Law was successfully used in practice. And indeed particularly in chemistry and engineering it’s often been in the background, justifying all the computations routinely done using entropy. But despite its ubiquitous appearance in textbooks, when it comes to foundational questions, there’s always been a certain air of mystery around the Second Law. Though after 150 years there’s typically an assumption that “somehow it must all have been worked out”. I myself have been interested in the Second Law now for a little more than 50 years, and over that time I’ve had a growing awareness that actually, no, it hasn’t all been worked out. Which is why, now, it’s wonderful to see the computational paradigm—and ideas from our Physics Project—after all these years be able to provide solid foundations for understanding the Second Law, as well as seeing its limitations.

And from the vantage point of the understanding we now have, we can go back and realize that there were precursors of it even from long ago. In some ways it’s all an inspiring tale—of how there were scientists with ideas ahead of their time, blocked only by the lack of a conceptual framework that would take another century to develop. But in other ways it’s also a cautionary tale, of how the forces of “conventional wisdom” can blind people to unanswered questions and—over a surprisingly long time—inhibit the development of new ideas.

But, first and foremost, the story of the Second Law is the story of a great intellectual achievement of the mid-19th century. It’s exciting now, of course, to be able to use the latest 21st-century ideas to take another step. But to appreciate how this fits in with what’s already known we have to go back and study the history of what originally led to the Second Law, and how what emerged as conventional wisdom about it took shape.

What Is Heat?

Once it became clear what heat is, it actually didn’t take long for the Second Law to be formulated. But for centuries—and indeed until the mid-1800s—there was all sorts of confusion about the nature of heat.

That there’s a distinction between hot and cold is a matter of basic human perception. And seeing fire one might imagine it as a disembodied form of heat. In ancient Greek times Heraclitus (~500 BC) talked about everything somehow being “made of fire”, and also somehow being intrinsically “in motion”. Democritus (~460–~370 BC) and the Epicureans had the important idea (that also arose independently in other cultures) that everything might be made of large numbers of a few types of tiny discrete atoms. They imagined these atoms moving around in the “void” of space. And when it came to heat, they seem to have correctly associated it with the motion of atoms—though they imagined it came from particular spherical “fire” atoms that could slide more quickly between other atoms, and they also thought that souls were the ultimate sources of motion and heat (at least in warm-blooded animals?), and were made of fire atoms.

And for two thousand years that’s pretty much where things stood. And indeed in 1623 Galileo (1564–1642) (in his book The Assayer, about weighing competing world theories) was still saying:

Those materials which produce heat in us and make us feel warmth, which are known by the general name of “fire,” would then be a multitude of minute particles having certain shapes and moving with certain velocities. Meeting with our bodies, they penetrate by means of their extreme subtlety, and their touch as felt by us when they pass through our substance is the sensation we call “heat.”

He goes on:

Since the presence of fire-corpuscles alone does not suffice to excite heat, but their motion is needed also, it seems to me that one may very reasonably say that motion is the cause of heat… But I hold it to be silly to accept that proposition in the ordinary way, as if a stone or piece of iron or a stick must heat up when moved. The rubbing together and friction of two hard bodies, either by resolving their parts into very subtle flying particles or by opening an exit for the tiny fire-corpuscles within, ultimately sets these in motion; and when they meet our bodies and penetrate them, our conscious mind feels those pleasant or unpleasant sensations which we have named heat…

And although he can tell there’s something different about it, he thinks of heat as effectively being associated with a substance or material:

The tenuous material which produces heat is even more subtle than that which causes odor, for the latter cannot leak through a glass container, whereas the material of heat makes its way through any substance.

In 1620, Francis Bacon (1561–1626) (in his “update on Aristotle”, The New Organon) says, a little more abstractly, if obscurely—and without any reference to atoms or substances:

[It is not] that heat generates motion or that motion generates heat (though both are true in certain cases), but that heat itself, its essence and quiddity, is motion and nothing else.

But real progress in understanding the nature of heat had to wait for more understanding about the nature of gases, with air being the prime example. (It was actually only in the 1640s that any kind of general notion of gas began to emerge—with the word “gas” being invented by the “anti-Galen” physician Jan Baptista van Helmont (1580–1644), as a Dutch rendering of the Greek word “chaos”, that meant essentially “void”, or primordial formlessness.) Ever since antiquity there’d been Aristotle-style explanations like “nature abhors a vacuum” about what nature “wants to do”. But by the mid-1600s the idea was emerging that there could be more explicit and mechanical explanations for phenomena in the natural world.

And in 1660 Robert Boyle (1627–1691)—now thoroughly committed to the experimental approach to science—published New Experiments Physico-mechanicall, Touching the Spring of the Air and its Effects in which he argued that air has an intrinsic pressure associated with it, which pushes it to fill spaces, and for which he effectively found Boyle’s Law PV = constant.

But what was air actually made of? Boyle had two basic hypotheses that he explained in rather flowery terms:

His first hypothesis was that air might be like a “fleece of wool” made of “aerial corpuscles” (gases were later often called “aeriform fluids”) with a “power or principle of self-dilatation” that resulted from there being “hairs” or “little springs” between these corpuscles. But he had a second hypothesis too—based, he said, on the ideas of “that most ingenious gentleman, Monsieur Descartes”: that instead air consists of “flexible particles” that are “so whirled around” that “each corpuscle endeavors to beat off all others”. In this second hypothesis, Boyle’s “spring of the air” was effectively the result of particles bouncing off each other.

And, as it happens, in 1668 there was quite an effort to understand the “laws of impact” (that would for example be applicable to balls in games like croquet and billiards, that had existed since at least the 1300s, and were becoming popular), with John Wallis (1616–1703), Christopher Wren (1632–1723) and Christiaan Huygens (1629–1695) all contributing, and Huygens producing diagrams like:

But while some understanding developed of what amount to impacts between pairs of hard spheres, there wasn’t the mathematical methodology—or probably the idea—to apply this to large collections of spheres.

Meanwhile, in his 1687 Principia Mathematica, Isaac Newton (1642–1727), wanting to analyze the properties of self-gravitating spheres of fluid, discussed the idea that fluids could in effect be made up of arrays of particles held apart by repulsive forces, as in Boyle’s first hypothesis. Newton had of course had great success with his 1/r² universal attractive force for gravity. But now he noted (writing originally in Latin) that with a 1/r repulsive force between particles in a fluid, he could essentially reproduce Boyle’s law:

Newton discussed questions like whether one particle would “shield” others from the force, but then concluded:

But whether elastic fluids do really consist of particles so repelling each other, is a physical question. We have here demonstrated mathematically the property of fluids consisting of particles of this kind, that hence philosophers may take occasion to discuss that question.
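In modern terms one can see roughly why a 1/r repulsion gives Boyle’s law. What follows is only a scaling sketch, not Newton’s own geometric argument, and it assumes that each particle pushes only on its nearest neighbors (which is where the “shielding” question comes in). The symbols N, V, d and k are introduced here purely for illustration:

```latex
% Illustrative assumptions: N particles fill a volume V with typical spacing d,
% and nearest neighbors repel with force f(d) = k/d.
\begin{align*}
d &\sim (V/N)^{1/3} \\
P &\sim \frac{\text{force per neighbor pair}}{\text{area per particle}}
   = \frac{k/d}{d^{2}} = \frac{k}{d^{3}} \sim \frac{kN}{V} \\
PV &\sim kN = \text{constant}
\end{align*}
```

With any other power law for the force, the factors of d would not combine into 1/V, and the product PV would depend on the volume.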

Well, in fact, particularly given Newton’s authority, for well over a century people pretty much just assumed that this was how gases worked. There was one major exception, however, in 1738, when—as part of his eclectic mathematical career spanning probability theory, elasticity theory, biostatistics, economics and more—Daniel Bernoulli (1700–1782) published his book on hydrodynamics. Mostly he discusses incompressible fluids and their flow, but in one section he considers “elastic fluids”—and along with a whole variety of experimental results about atmospheric pressure in different places—draws the picture

and says

Let the space ECDF contain very small particles in rapid motion; as they strike against the piston EF and hold it up by their impact, they constitute an elastic fluid which expands as the weight P is removed or reduced; but if P is increased it becomes denser and presses on the horizontal case CD just as if it were endowed with no elastic property.

Then—in a direct and clear anticipation of the kinetic theory of heat—he goes on:

The pressure of the air is increased not only by reduction in volume but also by rise in temperature. As it is well known that heat is intensified as the internal motion of the particles increases, it follows that any increase in the pressure of air that has not changed its volume indicates more intense motion of its particles, which is in agreement with our hypothesis…

But at the time, and in fact for more than a century thereafter, this wasn’t followed up.
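To make Bernoulli’s picture concrete, here is a minimal sketch using the later, standard kinetic-theory formula P = N m ⟨v²⟩ / (3V). This is a modern formulation rather than Bernoulli’s own notation, and the function name and numerical values are assumptions chosen only for illustration:

```python
def kinetic_pressure(n_particles, mass, mean_square_speed, volume):
    """Pressure of a gas of point particles: P = N * m * <v^2> / (3 * V)."""
    return n_particles * mass * mean_square_speed / (3.0 * volume)

# Illustrative values only (roughly nitrogen-like molecules); not from the text.
N = 1.0e22          # number of particles
m = 4.65e-26        # mass of one particle, kg
v2 = 500.0 ** 2     # mean square speed, m^2/s^2
V = 1.0e-3          # volume, m^3

p0 = kinetic_pressure(N, m, v2, V)
# Compress to half the volume with the same motion: pressure doubles (Boyle's law).
p_half_volume = kinetic_pressure(N, m, v2, V / 2)
# Keep the volume fixed but make the motion more intense: pressure rises in proportion.
p_faster = kinetic_pressure(N, m, 1.2 * v2, V)
```

The two comparisons correspond to the two effects in Bernoulli’s passage: reduction in volume, and more intense motion of the particles at fixed volume.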

A large part of the reason seems to have been that people just assumed that heat ultimately had to have some kind of material existence; to think that it was merely a manifestation of microscopic motion was too abstract an idea. And then there was the observation of “radiant heat” (i.e. infrared radiation)—that seemed like it could only work by explicitly transferring some kind of “heat material” from one body to another.

But what was this “heat material”? It was thought of as a fluid—called caloric—that could suffuse matter, and for example flow from a hotter body to a colder. And in an echo of Democritus, it was often assumed that caloric consisted of particles that could slide between ordinary particles of matter. There was some thought that it might be related to the concept of phlogiston from the mid-1600s, that was effectively a chemical substance, for example participating in chemical reactions or being generated in combustion (through the “principle of fire”). But the more mainstream view was that there were caloric particles that would collect around ordinary particles of matter (often called “molecules”, after the use of that term by Descartes (1596–1650) in 1620), generating a repulsive force that would for example expand gases—and that in various circumstances these caloric particles would move around, corresponding to the transfer of heat.

To us today it might seem hacky and implausible (perhaps a little like dark matter, cosmological inflation, etc.), but the caloric theory lasted for more than two hundred years and managed to explain plenty of phenomena—and indeed was certainly going strong in 1825 when Laplace wrote his A Treatise of Celestial Mechanics, which included a successful computation of properties of gases like the speed of sound and the ratio of specific heats, on the basis of a somewhat elaborated and mathematicized version of caloric theory (that by then included the concept of “caloric rays” associated with radiant heat).

But even though it wasn’t understood what heat ultimately was, one could still measure its attributes. Already in antiquity there were devices that made use of heat to produce pressure or mechanical motion. And by the beginning of the 1600s—catalyzed by Galileo’s development of the thermoscope (in which heated liquid could be seen to expand up a tube)—the idea quickly caught on of making thermometers, and of quantitatively measuring temperature.

And given a measurement of temperature, one could correlate it with effects one saw. So, for example, in the late 1700s the French balloonist Jacques Charles (1746–1823) noted the linear increase of volume of a gas with temperature. Meanwhile, at the beginning of the 1800s Joseph Fourier (1768–1830) (science advisor to Napoleon) developed what became his 1822 Analytical Theory of Heat, and in it he begins by noting that:

Heat, like gravity, penetrates every substance of the universe, its rays occupy all parts of space. The object of our work is to set forth the mathematical laws which this element obeys. The theory of heat will hereafter form one of the most important branches of general physics.

Later he describes what he calls the “Principle of the Communication of Heat”. He refers to “molecules”—though basically just to indicate a small amount of substance—and says

When two molecules of the same solid are extremely near and at unequal temperatures, the most heated molecule communicates to that which is less heated a quantity of heat exactly expressed by the product of the duration of the instant, of the extremely small difference of the temperatures, and of certain function of the distance of the molecules.

then goes on to develop what’s now called the heat equation and all sorts of mathematics around it, all the while effectively adopting a caloric theory of heat. (And, yes, if you think of heat as a fluid it does lead you to describe its “motion” in terms of differential equations just like Fourier did. Though it’s then ironic that Bernoulli, even though he studied hydrodynamics, seemed to have a less “fluid-based” view of heat.)
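Fourier’s principle translates almost directly into a discrete update rule. The following is a minimal sketch, assuming a one-dimensional rod of equal cells with unit heat capacity and insulated ends, and collapsing his “certain function of the distance” into a single constant k for nearest neighbors. The names diffuse_step, k and dt are hypothetical:

```python
def diffuse_step(temps, k, dt):
    """One explicit time step of nearest-neighbor heat exchange on a 1D rod.

    The heat passed between adjacent cells is k * dt * (temperature difference),
    subtracted from one cell and added to the other, so the total is conserved.
    """
    new = list(temps)
    for i in range(len(temps) - 1):
        flow = k * dt * (temps[i] - temps[i + 1])  # heat passing from cell i to cell i+1
        new[i] -= flow
        new[i + 1] += flow
    return new

# Start with all the heat in the first cell and let it spread.
rod = [100.0, 0.0, 0.0, 0.0, 0.0]
total_before = sum(rod)
for _ in range(2000):
    rod = diffuse_step(rod, k=0.2, dt=1.0)  # k * dt must stay below 0.5 for stability
total_after = sum(rod)
```

After many steps the temperatures even out while the total stays fixed, which is exactly the behavior one expects if heat is treated as a conserved fluid.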

Heat Engines and the Beginnings of Thermodynamics

At the beginning of the 1800s the Industrial Revolution was in full swing—driven in no small part by the availability of increasingly efficient steam engines. There had been precursors of steam engines even in antiquity, but it was only in 1712 that the first practical steam engine was developed. And after James Watt (1736–1819) produced a much more efficient version in 1776, the adoption of steam engines began to take off.

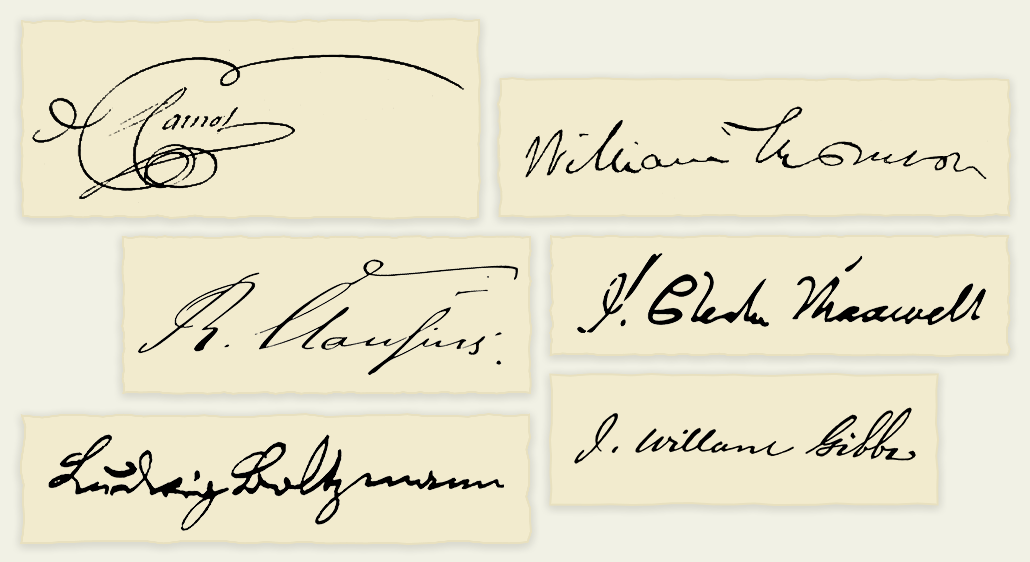

Over the years that followed there were all sorts of engineering innovations that increased the efficiency of steam engines. But it wasn’t clear how far it could go—and whether for example there was a limit to how much mechanical work could ever, even in principle, be derived from a given amount of heat. And it was the investigation of this question—in the hands of a young French engineer named Sadi Carnot (1796–1832)—that began the development of an abstract basic science of thermodynamics, and led to the Second Law.

The story really begins with Sadi Carnot’s father, Lazare Carnot (1753–1823), who was trained as an engineer but ascended to the highest levels of French politics, and was involved with both the French Revolution and Napoleon. Particularly in years when he was out of political favor, Lazare Carnot worked on mathematics and mathematical engineering. His first significant work—in 1778—was entitled Memoir on the Theory of Machines. The mathematical and geometrical science of mechanics was by then fairly well developed; Lazare Carnot’s objective was to understand its consequences for actual engineering machines, and to somehow abstract general principles from the mechanical details of the operation of those machines. In 1803 (alongside works on the geometrical theory of fortifications) he published his Fundamental Principles of [Mechanical] Equilibrium and Movement, which argued for what was at one time called (in a strange foreshadowing of reversible thermodynamic processes) “Carnot’s Principle”: that useful work in a machine will be maximized if accelerations and shocks of moving parts are minimized—and that a machine with perpetual motion is impossible.

Sadi Carnot was born in 1796, and was largely educated by his father until he went to college in 1812. It’s notable that during the years when Sadi Carnot was a kid, one of his father’s activities was to give opinions on a whole range of inventions—including many steam engines and their generalizations. Lazare Carnot died in 1823. Sadi Carnot was by that point a well-educated but professionally undistinguished French military engineer. But in 1824, at the age of 28, he produced his one published work, Reflections on the Motive Power of Fire, and on Machines to Develop That Power (where by “fire” he meant what we would call heat):

The style and approach of the younger Carnot’s work is quite similar to his father’s. But the subject matter turned out to be more fruitful. The book begins:

Everyone knows that heat can produce motion. That it possesses vast motive-power none can doubt, in these days when the steam-engine is everywhere so well known… The study of these engines is of the greatest interest, their importance is enormous, their use is continually increasing, and they seem destined to produce a great revolution in the civilized world. Already the steam-engine works our mines, impels our ships, excavates our ports and our rivers, forges iron, fashions wood, grinds grain, spins and weaves our cloths, transports the heaviest burdens, etc. It appears that it must some day serve as a universal motor, and be substituted for animal power, water-falls, and air currents. …

Notwithstanding the work of all kinds done by steam-engines, notwithstanding the satisfactory condition to which they have been brought to-day, their theory is very little understood, and the attempts to improve them are still directed almost by chance. …

The question has often been raised whether the motive power of heat is unbounded, whether the possible improvements in steam-engines have an assignable limit, a limit which the nature of things will not allow to be passed by any means whatever; or whether, on the contrary, these improvements may be carried on indefinitely. We propose now to submit these questions to a deliberate examination.

Carnot operated very much within the framework of caloric theory, and indeed his ideas were crucially based on the concept that one could think about “heat itself” (which for him was caloric fluid), independent of the material substance (like steam) that was hot. But—like his father’s efforts with mechanical machines—his goal was to develop an abstract “metamodel” of something like a steam engine, crucially assuming that the generation of unbounded heat or mechanical work (i.e. perpetual motion) in the closed cycle of the operation of the machine was impossible, and noting (again with a reflection of his father’s work) that the system would necessarily maximize efficiency if it operated reversibly. And he then argued that:

The production of motive power is then due in steam-engines not to an actual consumption of caloric, but to its transportation from a warm body to a cold body, that is, to its re-establishment of equilibrium…

In other words, what was important about a steam engine was that it was a “heat engine”, that “moved heat around”. His book is mostly words, with just a few formulas related to the behavior of ideal gases, and some tables of actual parameters for particular materials. But even though his underlying conceptual framework—of caloric theory—was not correct, the abstract arguments that he made (that involved essentially logical consequences of reversibility and of operating in a closed cycle) were robust enough that it didn’t matter, and in particular he was able to successfully show that there was a theoretical maximum efficiency for a heat engine, that depended only on the temperatures of its hot and cold reservoirs of heat. But what’s important for our purposes here is that in the setup Carnot constructed he basically ended up introducing the Second Law.
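In its modern form, the maximum efficiency is usually written as 1 − T_cold/T_hot, with both temperatures on an absolute scale. That form relies on the absolute temperature scale that came later, so the sketch below is the present-day statement of Carnot’s result rather than anything in his book. The function name and the reservoir temperatures are assumptions for illustration:

```python
def carnot_efficiency(t_hot_kelvin, t_cold_kelvin):
    """Upper bound on work obtained per unit of heat drawn from the hot reservoir."""
    if t_cold_kelvin <= 0 or t_hot_kelvin <= t_cold_kelvin:
        raise ValueError("need t_hot_kelvin > t_cold_kelvin > 0")
    return 1.0 - t_cold_kelvin / t_hot_kelvin

# Illustrative reservoirs (assumed values): boiling water and a room-temperature condenser.
eff_low = carnot_efficiency(373.15, 293.15)
# A hotter boiler with the same condenser raises the theoretical ceiling.
eff_high = carnot_efficiency(473.15, 293.15)
```

Nothing about the working substance appears in the function, which is the point of Carnot’s argument: only the two reservoir temperatures matter.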

At the time it appeared, however, Carnot’s book was basically ignored, and Carnot died in obscurity from cholera in 1832 (about 3 months after Évariste Galois (1811–1832)) at the age of 36. (The Sadi Carnot who would later become president of France was his nephew.) But in 1834, Émile Clapeyron (1799–1864)—a rather distinguished French engineering professor (and steam engine designer)—wrote a paper entitled “Memoir on the Motive Power of Heat”. He starts off by saying about Carnot’s book:

The idea which serves as a basis of his researches seems to me to be both fertile and beyond question; his demonstrations are founded on the absurdity of the possibility of creating motive power or heat out of nothing. …

This new method of demonstration seems to me worthy of the attention of theoreticians; it seems to me to be free of all objection …

I believe that it is of some interest to take up this theory again; S. Carnot, avoiding the use of mathematical analysis, arrives by a chain of difficult and elusive arguments at results which can be deduced easily from a more general law which I shall attempt to prove…

Clapeyron’s paper doesn’t live up to the claims of originality or rigor expressed here, but it served as a more accessible (both in terms of where it was published and how it was written) exposition of Carnot’s work, featuring, for example, for the first time a diagrammatic representation of a Carnot cycle

as well as notations like Q-for-heat that are still in use today:

The Second Law Is Formulated

One of the implications of Newton’s Laws of Motion is that momentum is conserved. But what else might also be conserved? In the 1680s Gottfried Leibniz (1646–1716) suggested the quantity m v², which he called, rather grandly, vis viva—or, in English, “life force”. And yes, in things like elastic collisions, this quantity did seem to be conserved. But in plenty of situations it wasn’t. By 1807 the term “energy” had been introduced, but the question remained of whether it could in any sense globally be thought of as conserved.
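The difference between the two cases is easy to check numerically. Here is a minimal sketch of a head-on collision in one dimension, assuming a simple coefficient-of-restitution model (1 for perfectly elastic, 0 for bodies that stick together). The function names and the masses and velocities are illustrative assumptions:

```python
def collide_1d(m1, v1, m2, v2, restitution=1.0):
    """Velocities after a head-on collision.

    In the center-of-mass frame each velocity is reversed and scaled by the
    restitution coefficient, which conserves momentum for any restitution value.
    """
    v_cm = (m1 * v1 + m2 * v2) / (m1 + m2)
    u1 = v_cm - restitution * (v1 - v_cm)
    u2 = v_cm - restitution * (v2 - v_cm)
    return u1, u2

def momentum(m1, v1, m2, v2):
    return m1 * v1 + m2 * v2

def vis_viva(m1, v1, m2, v2):
    return m1 * v1 ** 2 + m2 * v2 ** 2

m1, v1, m2, v2 = 2.0, 3.0, 1.0, -1.0
elastic = collide_1d(m1, v1, m2, v2, restitution=1.0)
sticky = collide_1d(m1, v1, m2, v2, restitution=0.0)

p_before = momentum(m1, v1, m2, v2)
vv_before = vis_viva(m1, v1, m2, v2)
vv_elastic = vis_viva(m1, elastic[0], m2, elastic[1])
vv_sticky = vis_viva(m1, sticky[0], m2, sticky[1])
```

Momentum comes out the same in both cases, but m v² survives only the elastic collision; in the sticky case part of it disappears from the mechanical accounting.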

It had seemed for a long time that heat was something a bit like mechanical energy, but the relation wasn’t clear—and the caloric theory of heat implied that caloric (i.e. the fluid corresponding to heat) was conserved, and so certainly wasn’t something that for example could be interconverted with mechanical energy. But in 1798 Benjamin Thompson (Count Rumford) (1753–1814) measured the heat produced by the mechanical process of boring a cannon, and began to make the argument that, in contradiction to the caloric theory, there was actually some kind of correspondence between mechanical energy and amount of heat.

It wasn’t a very accurate experiment, and it took until the 1840s—with new experiments by the English brewer and “amateur” scientist James Joule (1818–1889) and the German physician Robert Mayer (1814–1878)—before the idea of some kind of equivalence between heat and mechanical work began to look more plausible. And in 1847 this was something William Thomson (1824–1907) (later Lord Kelvin)—a prolific young physicist recently graduated from the Mathematical Tripos in Cambridge and now installed as a professor of “natural philosophy” (i.e. physics) in Glasgow—began to be curious about.

But first we have to go back a bit in the story. In 1845 Kelvin (as we’ll call him) had spent some time in Paris (primarily at a lab that was measuring properties of steam for the French government), and there he’d learned about Carnot’s work from Clapeyron’s paper (at first he couldn’t get a copy of Carnot’s actual book). Meanwhile, one of the issues of the time was a proliferation of different temperature scales based on using different kinds of thermometers based on different substances. And in 1848 Kelvin realized that Carnot’s concept of a “pure heat engine”—assumed at the time to be based on caloric—could be used to define an “absolute” scale of temperature in which, for example, at absolute zero all caloric would have been removed from all substances:

Having found Carnot’s ideas useful, Kelvin in 1849 wrote a 33-page summary of them (small world that it was then, the immediately preceding paper in the journal is “On the Theory of Rolling Curves”, written by the then-17-year-old James Clerk Maxwell (1831–1879), while the one that follows is “Theoretical Considerations on the Effect of Pressure in Lowering the Freezing Point of Water” by James Thomson (1822–1892), engineering-oriented older brother of William):

He characterizes Carnot’s work as being based not so much on physics and experiment, but on the “strictest principles of philosophy”:

He doesn’t immediately mention “caloric” (though it does slip in later), referring instead to a vaguer concept of “thermal agency”:

In keeping with the idea that this is more philosophy than experimental science, he refers to “Carnot’s fundamental principle”—that after a complete cycle an engine can be treated as back in the “same state”—while adding the footnote that “this is tacitly assumed as an axiom”:

In actuality, to say that an engine comes back to the same state is a nontrivial statement of the existence of some kind of unique equilibrium in the system, related to the Second Law. But in 1849 Kelvin brushes this off by saying that the “axiom” has “never, so far as I am aware, been questioned by practical engineers”.

His next page is notable for the first-ever use of the term “thermo-dynamic” (then hyphenated) to discuss systems where what matters is “the dynamics of heat”:

That same page has a curious footnote presaging what will come, and making the statement that “no energy can be destroyed”, and considering it “perplexing” that this seems incompatible with Carnot’s work and its caloric theory framework:

After going through Carnot’s basic arguments, the paper ends with an appendix in which Kelvin basically says that even though the theory seems to just be based on a formal axiom, it should be experimentally tested:

He proceeds to give some tests, which he claims agree with Carnot’s results—and finally ends with a very practical (but probably not correct) table of theoretical efficiencies for steam engines of his day:

But now what of Joule’s and Mayer’s experiments, and their apparent disagreement with the caloric theory of heat? By 1849 a new idea had emerged: that perhaps heat was itself a form of energy, and that, when heat was accounted for, the total energy of a system would always be conserved. And what this suggested was that heat was somehow a dynamical phenomenon, associated with microscopic motion—which in turn suggested that gases might indeed consist just of molecules in motion.

And so it was that in 1851 Kelvin (then still “William Thomson”) wrote a long exposition “On the Dynamical Theory of Heat”, attempting to reconcile Carnot’s ideas with the new concept that heat was dynamical in origin:

He begins by quoting—presumably for some kind of “British-based authority”—an “anti-caloric” experiment apparently done by Humphry Davy (1778–1829) as a teenager, involving melting pieces of ice by rubbing them together, and included anonymously in a 1799 list of pieces of knowledge “principally from the west of England”:

But soon Kelvin is getting to the main point:

And then we have it: a statement of the Second Law (albeit with some hedging to which we’ll come back later):

And there’s immediately a footnote that basically asserts the “absurdity” of a Second-Law-violating perpetual motion machine:

But by the next page we find out that Kelvin admits he’s in some sense been “scooped”—by a certain Rudolf Clausius (1822–1888), who we’ll be discussing soon. But what’s remarkable is that Clausius’s “axiom” turns out to be exactly equivalent to Kelvin’s statement:

And what this suggests is that the underlying concept—the Second Law—is something quite robust. And indeed, as Kelvin implies, it’s the main thing that ultimately underlies Carnot’s results. And so even though Carnot is operating on the now-outmoded idea of caloric theory, his main results are still correct, because in the end all they really depend on is a certain amount of “logical structure”, together with the Second Law (and a version of the First Law, but that’s a slightly trickier story).

Kelvin recognized, though, that Carnot had chosen to look at the particular (“equilibrium thermodynamics”) case of processes that occur reversibly, effectively at an infinitesimal rate. And at the end of the first installment of his exposition, he explains that things will be more complicated if finite rates are considered—and that in particular the results one gets in such cases will depend on things like having a correct model for the nature of heat.

Kelvin’s exposition on the “dynamical nature of heat” runs to four installments, and the next two dive into detailed derivations and attempted comparison with experiment:

But before Kelvin gets to publish part four of his exposition he publishes two other pieces. In the first, he’s talking about sources of energy for human use (now that he believes energy is conserved):

He emphasizes that the Sun is—directly or indirectly—the main source of energy on Earth (later he’ll argue that coal will run out, etc.):

But he wonders how animals actually manage to produce mechanical work, noting that “the animal body does not act as a thermo-dynamic engine; and [it is] very probable that the chemical forces produce the external mechanical effects through electrical means”:

And then, by April 1852, he’s back to thinking directly about the Second Law, and he’s cut through the technicalities, and is stating the Second Law in everyday (if slightly ponderous) terms:

It’s interesting to see his apparently rather deeply held Presbyterian beliefs manifest themselves here in his mention that “Creative Power” is what must set the total energy of the universe. He ends his piece with:

In (2) the hedging is interesting. He makes the definitive assertion that what amounts to a violation of the Second Law “is impossible in inanimate material processes”. And he’s pretty sure the same is true for “vegetable life” (recognizing that in his previous paper he discussed the harvesting of sunlight by plants). But what about “animal life”, like us humans? Here he says that “by our will” we can’t violate the Second Law—so we can’t, for example, build a machine to do it. But he leaves it open whether we as humans might have some innate (“God-given”?) ability to overcome the Second Law.

And then there’s his (3). It’s worth realizing that his whole paper is less than 3 pages long, and right before his conclusions we’re seeing triple integrals:

So what is (3) about? It’s presumably something like a Second-Law-implies-heat-death-of-the-universe statement (but what’s this stuff about the past?)—but with an added twist that there’s something (God?) beyond the “known operations going on at present in the material world” that might be able to swoop in to save the world for us humans.

It doesn’t take people long to pick up on the “cosmic significance” of all this. But in the fall of 1852, Kelvin’s colleague, the Glasgow engineering professor William Rankine (1820–1872) (who was deeply involved with the First Law of thermodynamics), is writing about a way the universe might save itself:

After touting the increasingly solid evidence for energy conservation and the First Law

he goes on to talk about dissipation of energy and what we now call the Second Law

and the fact that it implies an “end of all physical phenomena”, i.e. heat death of the universe. He continues:

But now he offers a “ray of hope”. He believes that there must exist a “medium capable of transmitting light and heat”, i.e. an aether, “[between] the heavenly bodies”. And if this aether can’t itself acquire heat, he concludes that all energy must be converted into a radiant form:

Now he supposes that the universe is effectively a giant drop of aether, with nothing outside, so that all this radiant energy will get totally internally reflected from its surface, allowing the universe to “[reconcentrate] its physical energies, and [renew] its activity and life”—and save it from heat death:

He ends with the speculation that perhaps “some of the luminous objects which we see in distant regions of space may be, not stars, but foci in the interstellar aether”.

But independent of cosmic speculations, Kelvin himself continues to study the “dynamical theory of heat”. It’s often a bit unclear what’s being assumed. There’s the First Law (energy conservation). And the Second Law. But there’s also reversibility. Equilibrium. And the ideal gas law (P V = R T). But it soon becomes clear that that’s not always correct for real gases—as the Joule–Thomson effect demonstrates:

Kelvin soon returned to more cosmic speculations, suggesting that perhaps gravitation—rather than direct “Creative Power”—might “in reality [be] the ultimate created antecedent of all motion…”:

Not long after these papers Kelvin got involved with the practical “electrical” problem of laying a transatlantic telegraph cable, and in 1858 was on the ship that first succeeded in doing this. (His commercial efforts soon allowed him to buy a 126-ton yacht.) But he continued to write physics papers, which ranged over many different areas, occasionally touching thermodynamics, though most often in the service of answering a “general science” question—like how old the Sun is (he estimated 32,000 years from thermodynamic arguments, though of course without knowledge of nuclear reactions).

Kelvin’s ideas about the inevitable dissipation of “useful energy” spread quickly—by 1854, for example, finding their way into an eloquent public lecture by Hermann von Helmholtz (1821–1894). Helmholtz had trained as a doctor, becoming in 1843 a surgeon to a German military regiment. But he was also doing experiments and developing theories about “animal heat” and how muscles manage to “do mechanical work”, for example publishing an 1845 paper entitled “On Metabolism during Muscular Activity”. And in 1847 he was one of the inventors of the law of conservation of energy—and the First Law of thermodynamics—as well as perhaps its clearest expositor at the time (the word “force” in the title is what we now call “energy”):

By 1854 Helmholtz was a physiology professor, beginning a distinguished career in physics, psychophysics and physiology—and talking about the Second Law and its implications. He began his lecture by saying that “A new conquest of very general interest has been recently made by natural philosophy”—and what he’s referring to here is the Second Law:

Having discussed the inability of “automata” (he uses that word) to reproduce living systems, he starts talking about perpetual motion machines:

First he disposes of the idea that perpetual motion can be achieved by generating energy from nothing (i.e. violating the First Law), charmingly including the anecdote:

And then he’s on to talking about the Second Law

and discussing how it implies the heat death of the universe:

He notes, correctly, that the Second Law hasn’t been “proved”. But he’s impressed at how Kelvin was able to go from a “mathematical formula” to a global fact about the fate of the universe:

He ends the whole lecture quite poetically:

We’ve talked quite a bit about Kelvin and how his ideas spread. But let’s turn now to Rudolf Clausius, who in 1850 at least to some extent “scooped” Kelvin on the Second Law. At that time Clausius was a freshly minted German physics PhD. His thesis had been on an ingenious but ultimately incorrect theory of why the sky is blue. But he’d also worked on elasticity theory, and there he’d been led to start thinking about molecules and their configurations in materials. By 1850 caloric theory had become fairly elaborate, complete with concepts like “latent heat” (bound to molecules) and “free heat” (able to be transferred). Clausius’s experience in elasticity theory made him skeptical, and knowing Mayer’s and Joule’s results he decided to break with the caloric theory—writing his career-launching paper (translated from German in 1851, with Carnot’s puissance motrice [“motive power”] being rendered as “moving force”):

The first installment of the English version of the paper gives a clear description of the ideal gas laws and the Carnot cycle, having started from a statement of the “caloric-busting” First Law:

The general discussion continues in the second installment, but now there’s a critical side comment that describes the “general deportment of heat, which every-where exhibits the tendency to annul differences of temperature, and therefore to pass from a warmer body to a colder one”:

Clausius “has” the Second Law, as Carnot basically did before him. But when Kelvin quotes Clausius he does so much more forcefully:

But there it is: by 1852 the Second Law is out in the open, in at least two different forms. The path to reach it has been circuitous and quite technical. But in the end, stripped of its technical origins, the law seems somehow unsurprising and even obvious. For it’s a matter of common experience that heat flows from hotter bodies to colder ones, and that motion is dissipated by friction into heat. But the point is that it wasn’t until basically 1850 that the overall scientific framework existed to make it useful—or even really possible—to enunciate such observations as a formal scientific law.

Of course the fact that a law “seems true” based on common experience doesn’t mean it’ll always be true, and that there won’t be some special circumstance or elaborate construction that will evade it. But somehow the very fact that the Second Law had in a sense been “technically hard won”—yet in the end seemed so “obvious”—appears to have given it a sense of inevitability and certainty. And it didn’t hurt that somehow it seemed to have emerged from Carnot’s work, which had a certain air of “logical necessity”. (Of course, in reality, the Second Law entered Carnot’s logical structure as an “axiom”.) But all this helped set the stage for some of the curious confusions about the Second Law that would develop over the century that followed.

The Concept of Entropy

In the first half of the 1850s the Second Law had in a sense been presented in two ways. First, as an almost “footnote-style” assumption needed to support the “pure thermodynamics” that had grown out of Carnot’s work. And second, as an explicitly-stated-for-the-first-time—if “obvious”—“everyday” feature of nature, that was now realized as having potentially cosmic significance. But an important feature of the decade that followed was a certain progressive at-least-phenomenological “mathematicization” of the Second Law—pursued most notably by Rudolf Clausius.

In 1854 Clausius was already beginning this process. Perhaps confusingly, he refers to the Second Law as the “second fundamental theorem [Hauptsatz]” in the “mechanical theory of heat”—suggesting it’s something that is proved, even though it’s really introduced just as an empirical law of nature, or perhaps a theoretical axiom:

He starts off by discussing the “first fundamental theorem”, i.e. the First Law. And he emphasizes that this implies that there’s a quantity U (which we now call “internal energy”) that is a pure “function of state”—so that its value depends only on the state of a system, and not the path by which that state was reached. And as an “application” of this, he then points out that the overall change in U in a cyclic process (like the one executed by Carnot’s heat engine) must be zero.

And now he’s ready to tackle the Second Law. He gives a statement that at first seems somewhat convoluted:

But soon he’s deriving this from a more “everyday” statement of the Second Law (which, notably, is clearly not a “theorem” in any normal sense):

After giving a Carnot-style argument he’s then got a new statement (that he calls “the theorem of the equivalence of transformations”) of the Second Law:

And there it is: basically what we now call entropy (even with the same notation of Q for heat and T for temperature)—together with the statement that this quantity is a function of state, so that its differences are “independent of the nature of the process by which the transformation is effected”.

Pretty soon there’s a familiar expression for entropy change:

And by the next page he’s giving what he describes as “the analytical expression” of the Second Law, for the particular case of reversible cyclic processes:

A bit later he backs out of the assumption of reversibility, concluding that:

(And, yes, with modern mathematical rigor, that should be “non-negative” rather than “positive”.)

He goes on to say that if something has changed after going around a cycle, he’ll call that an “uncompensated transformation”—or what we would now refer to as an irreversible change. He lists a few possible (now very familiar) examples:

Earlier in his paper he’s careful to say that T is “a function of temperature”; he doesn’t say it’s actually the quantity we measure as temperature. But now he wants to determine what it is:

He doesn’t talk about the ultimately critical assumption (effectively the Zeroth Law of thermodynamics) that the system is “in equilibrium”, with a uniform temperature. But he uses an ideal gas as a kind of “standard material”, and determines that, yes, in that case T can be simply the absolute temperature.
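To make the ideal-gas step concrete, here is a minimal sketch (in modern notation, not Clausius's own) of a reversible Carnot cycle run on an ideal gas. The temperatures, volumes and function name are illustrative assumptions. It checks that if T is taken to be the absolute temperature, the sum of Q/T around the cycle vanishes, and the efficiency comes out as 1 − Tc/Th.

```python
import math

R = 8.314462618  # J/(mol K), molar gas constant

def carnot_heats(t_hot, t_cold, v1, v2, n_mol=1.0):
    """Heat absorbed on the two isothermal legs of an ideal-gas Carnot cycle.

    Leg 1: isothermal expansion v1 -> v2 at t_hot  (heat q_hot > 0 flows in).
    Leg 3: isothermal compression at t_cold        (heat q_cold < 0 flows out).
    The adiabatic legs exchange no heat, and for an ideal gas they force the
    volume ratio on the cold isotherm to be the inverse of v2 / v1.
    """
    q_hot = n_mol * R * t_hot * math.log(v2 / v1)
    q_cold = -n_mol * R * t_cold * math.log(v2 / v1)
    return q_hot, q_cold

# Illustrative values (assumptions of this sketch)
t_hot, t_cold = 500.0, 300.0
q_hot, q_cold = carnot_heats(t_hot, t_cold, v1=1.0, v2=3.0)

cycle_sum = q_hot / t_hot + q_cold / t_cold   # sum of Q/T around the cycle
efficiency = (q_hot + q_cold) / q_hot         # net work out / heat taken in

print(f"sum of Q/T around the cycle: {cycle_sum:.3e}")
print(f"efficiency: {efficiency:.4f}   1 - Tc/Th: {1 - t_cold / t_hot:.4f}")
```

The point of the sketch is only that, for this one "standard material", the function of temperature that makes the cyclic sum vanish is the absolute temperature itself.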

So there it is: in 1854 Clausius has effectively defined entropy and described its relation to the Second Law, though everything is being done in a very “heat-engine” style. And pretty soon he’s writing about “Theory of the Steam-Engine” and filling actual approximate steam tables into his theoretical formulas:

After a few years “off” (working, as we’ll discuss later, on the kinetic theory of gases) Clausius is back in 1862 talking about the Second Law again, in terms of his “theorem of the equivalence of transformations”:

He’s slightly tightened up his 1854 discussion, but, more importantly, he’s now stating a result not just for reversible cyclic processes, but for general ones:

But what does this result really mean? Clausius claims that this “theorem admits of strict mathematical proof if we start from the fundamental proposition above quoted”—though it’s not particularly clear just what that proposition is. But then he says he wants to find a “physical cause”:

A little earlier in the paper he said:

So what does he think the “physical cause” is? He says that even from his first investigations he’d assumed a general law:

What are these “resistances”? He’s basically saying they are the forces between molecules in a material (which from his work on the kinetic theory of gases he now imagines exist):

He introduces what he calls the “disgregation” to represent the microscopic effect of adding heat:

For ideal gases things are straightforward, including the proportionality of “resistance” to absolute temperature. But in other cases, it’s not so clear what’s going on. A decade later he identifies “disgregation” with average kinetic energy per molecule—which is indeed proportional to absolute temperature. But in 1862 it’s all still quite muddy, with somewhat curious statements like:

And then the main part of the paper ends with what seems to be an anticipation of the Third Law of thermodynamics:

There’s an appendix entitled “On Terminology” which admits that between Clausius’s own work, and other people’s, it’s become rather difficult to follow what’s going on. He agrees that the term “energy” that Kelvin is using makes sense. He suggests “energy of the body” for what he calls U and we now call “internal energy”. He suggests “heat of the body” or “thermal content of the body” for Q. But then he talks about the fact that these are measured in thermal units (say the amount of heat needed to increase the temperature of water by 1°), while mechanical work is measured in units related to kilograms and meters. He proposes therefore to introduce the concept of “ergon” for “work measured in thermal units”:

And pretty soon he’s talking about the “interior ergon” and “exterior ergon”, as well as concepts like “ergonized heat”. (In later work he also tries to introduce the concept of “ergal” to go along with his development of what he called—in a name that did stick—the “virial theorem”.)

But in 1865 he has his biggest success in introducing a term. He’s writing a paper, he says, basically to clarify the Second Law (or, as he calls it, “the second fundamental theorem”—rather confidently asserting that he will “prove this theorem”):

Part of the issue he’s trying to address is how the calculus is done:

The partial derivative symbol ∂ had been introduced in the late 1700s. He doesn’t use it, but he does introduce the now-standard-in-thermodynamics subscript notation for variables that are kept constant:

A little later, as part of the “notational cleanup”, we see the variable S:

And then—there it is—Clausius introduces the term “entropy”, “Greekifying” his concept of “transformation”:

His paper ends with his famous crisp statements of the First and Second Laws of thermodynamics—manifesting the parallelism he’s been claiming between energy and entropy:

The Kinetic Theory of Gases

We began above by discussing the history of the question of “What is heat?” Was it like a fluid—the caloric theory? Or was it something more dynamical, and in a sense more abstract? But then we saw how Carnot—followed by Kelvin and Clausius—managed in effect to sidestep the question, and come up with all sorts of “thermodynamic conclusions”, by talking just about “what heat does” without ever really having to seriously address the question of “what heat is”. But to be able to discuss the foundations of the Second Law—and what it says about heat—we have to know more about what heat actually is. And the crucial development that began to clarify the nature of heat was the kinetic theory of gases.

Central to the kinetic theory of gases is the idea that gases are made up of discrete molecules. And it’s important to remember that it wasn’t until the beginning of the 1900s that anyone knew for sure that molecules existed. Yes, something like them had been discussed ever since antiquity, and in the 1800s there was increasing “circumstantial evidence” for them. But nobody had directly “seen a molecule”, or been able, for example, until about 1870, to even guess what the size of molecules might be. Still, by the mid-1800s it had become common for physicists to talk and reason in terms of ordinary matter at least effectively being made up of molecules.

But if a gas was made of molecules bouncing off each other like billiard balls according to the laws of mechanics, what would its overall properties be? Daniel Bernoulli had in 1738 already worked out the basic answer that pressure would vary inversely with volume, or in his notation, π = P/s (and he even also gave formulas for molecules of nonzero size—in a precursor of van der Waals):

Results like Bernoulli’s would be rediscovered several times, for example in 1820 by John Herapath (1790–1868), a math teacher in England, who developed a fairly elaborate theory that purported to describe gravity as well as heat (but for example implied a P V = a T² gas law):

Then there was the case of John Waterston (1811–1883), a naval instructor for the East India Company, who in 1843 published a book called Thoughts on the Mental Functions, which included results on what he called the “vis viva theory of heat”—that he developed in more detail in a paper he wrote in 1846. But when he submitted the paper to the Royal Society it was rejected as “nonsense”, and its manuscript was “lost” until 1891 when it was finally published (with an “explanation” of the “delay”):

The paper had included a perfectly sensible mathematical analysis that included a derivation of the kinetic theory relation between pressure and mean-square molecular velocity:

But with all these pieces of work unknown, it fell to a German high-school chemistry teacher (and sometime professor and philosophical/theological writer) named August Krönig (1822–1879) to publish in 1856 yet another “rediscovery”, that he entitled “Principles of a Theory of Gases”. He said it was going to analyze the “mechanical theory of heat”, and once again he wanted to compute the pressure associated with colliding molecules. But to simplify the math, he assumed that molecules went only along the coordinate directions, at a fixed speed—almost anticipating a cellular automaton fluid:

What ultimately launched the subsequent development of the kinetic theory of gases, however, was the 1857 publication by Rudolf Clausius (by then an increasingly established German physics professor) of a paper entitled rather poetically “On the Nature of the Motion Which We Call Heat” (“Über die Art der Bewegung die wir Wärme nennen”):

It’s a clean and clear paper, with none of the mathematical muddiness around Clausius’s work on the Second Law (which, by the way, isn’t even mentioned in this paper even though Clausius had recently worked on it). Clausius figures out lots of the “obvious” implications of his molecular theory, outlining for example what happens in different phases of matter:

It takes him only a couple of pages of very light mathematics to derive the standard kinetic theory formula for the pressure of an ideal gas:

He’s implicitly assuming a certain randomness to the motions of the molecules, but he barely mentions it (and this particular formula is robust enough that average values are actually all that matter):

But having derived the formula for pressure, he goes on to use the ideal gas law to derive the relation between average molecular kinetic energy (which he still calls “vis viva”) and absolute temperature:

From this he can do things like work out the actual average velocities of molecules in different gases—which he does without any mention of the question of just how real or not molecules might be. By knowing experimental results about specific heats of gases he also manages to determine that not all the energy (“heat”) in a gas is associated with “translatory motion”: he realizes that for molecules involving several atoms there can be energy associated with other (as we would now say) internal degrees of freedom:
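The step from the pressure formula to actual molecular speeds can be sketched in a few lines. This is a modern restatement, not Clausius's own calculation: it combines P V = (1/3) N m ⟨v²⟩ with the ideal gas law to get a root-mean-square speed of √(3RT/M), and the molar masses and gas constant used are modern values assumed for illustration.

```python
import math

R = 8.314462618   # J/(mol K), molar gas constant
T_ICE = 273.15    # K, temperature of melting ice

# Molar masses in kg/mol (modern values, an assumption of this sketch)
MOLAR_MASS = {"oxygen": 31.998e-3, "nitrogen": 28.014e-3, "hydrogen": 2.016e-3}

def rms_speed(molar_mass, temperature):
    """Root-mean-square molecular speed, from P V = (1/3) N m <v^2> and P V = n R T."""
    return math.sqrt(3.0 * R * temperature / molar_mass)

for gas, molar_mass in MOLAR_MASS.items():
    print(f"{gas:9s} {rms_speed(molar_mass, T_ICE):7.0f} m/s")
```

The speeds come out at hundreds of meters per second (a bit over 460 m/s for oxygen, and roughly four times that for hydrogen), which is what makes the question of why gases mix so slowly a natural one to ask.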

Clausius’s paper was widely read. And it didn’t take long before the Dutch meteorologist (and effectively founder of the World Meteorological Organization) Christophorus Buys Ballot (1817–1890) asked why—if molecules were moving as quickly as Clausius suggested—gases didn’t mix much more quickly than they’re observed to do:

Within a few months, Clausius published the answer: the molecules didn’t just keep moving in straight lines; they were constantly being deflected, to follow what we would now call a random walk. He invented the concept of a mean free path to describe how far on average a molecule goes before it hits another molecule:

As a capable theoretical physicist, Clausius quickly brings in the concept of probability

and is soon computing the average number of molecules which will survive undeflected for a certain distance:

Then he works out the mean free path λ (and it’s often still called λ):
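The probabilistic argument here can be illustrated with a small simulation. It is a sketch under a stated assumption, not a reconstruction of Clausius's derivation: each molecule is taken to have the same small chance dx/λ of being deflected in every short step dx, independent of how far it has already gone. With that assumption, the fraction surviving undeflected to distance x should be close to exp(−x/λ), and the average free path close to λ. All names and parameter values are hypothetical.

```python
import math
import random

MEAN_FREE_PATH = 1.0   # arbitrary length unit (assumed for illustration)
DX = 0.01              # step length, much smaller than the mean free path

def free_path_steps(p_hit, rng):
    """Count the steps of length DX a molecule travels before its first deflection."""
    steps = 0
    while rng.random() >= p_hit:
        steps += 1
    return steps

rng = random.Random(1)
paths = [free_path_steps(DX / MEAN_FREE_PATH, rng) for _ in range(20000)]

def survival_fraction(distance):
    """Fraction of simulated molecules that travel at least `distance` undeflected."""
    needed = round(distance / DX)
    return sum(k >= needed for k in paths) / len(paths)

mean_path = DX * sum(paths) / len(paths)
print(f"average free path: {mean_path:.3f}")
for d in (0.5, 1.0, 2.0):
    print(f"x = {d:3.1f}  simulated {survival_fraction(d):.3f}  "
          f"exp(-x/lambda) {math.exp(-d / MEAN_FREE_PATH):.3f}")
```

What the sketch leaves out is the harder part of the problem: relating λ itself to the size and number density of the molecules.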

And he concludes that actually there’s no conflict between rapid microscopic motion and large-scale “diffusive” motion:

Of course, he could have actually drawn a sample random walk, but drawing diagrams wasn’t his style. And in fact it seems as if the first published drawing of a random walk was something added by John Venn (1834–1923) in the 1888 edition of his Logic of Chance—and, interestingly, in alignment with my computational irreducibility concept from a century later he used the digits of π to generate his “randomness”:
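A walk of this general kind is easy to sketch. The version below is an illustration, not a reproduction of Venn's figure: it assumes that digits 0 through 7 each select one of eight compass directions and that digits 8 and 9 are skipped, and the particular ordering of directions is an arbitrary choice made here. The digits of π are computed with Machin's formula in integer arithmetic, so nothing beyond the standard library is needed.

```python
import math

def arctan_inverse(x, scale):
    """Integer approximation to scale * arctan(1/x), from the Taylor series."""
    term = scale // x
    total = term
    x_squared = x * x
    k = 3
    sign = -1
    while term:
        term //= x_squared
        total += sign * (term // k)
        k += 2
        sign = -sign
    return total

def pi_digits(n):
    """First n decimal digits of pi (3, 1, 4, ...) via Machin's formula."""
    guard = 10  # extra digits to absorb rounding in the integer arithmetic
    scale = 10 ** (n + guard)
    pi_scaled = 16 * arctan_inverse(5, scale) - 4 * arctan_inverse(239, scale)
    return [int(c) for c in str(pi_scaled // 10 ** guard)[:n]]

# Eight compass directions; this ordering is an assumption of the sketch
DIRECTIONS = [(0, 1), (1, 1), (1, 0), (1, -1), (0, -1), (-1, -1), (-1, 0), (-1, 1)]

def digit_walk(digits):
    """Take one step per digit 0-7 in the matching direction; skip 8 and 9."""
    x = y = 0
    path = [(x, y)]
    for d in digits:
        if d < 8:
            dx, dy = DIRECTIONS[d]
            x, y = x + dx, y + dy
            path.append((x, y))
    return path

path = digit_walk(pi_digits(400))
end_x, end_y = path[-1]
print(f"steps taken: {len(path) - 1}")
print(f"end-to-end distance: {math.hypot(end_x, end_y):.2f}")
```

For a walk like this the end-to-end distance typically grows only like the square root of the number of steps, which is the quantitative content of Clausius's point that rapid microscopic motion is compatible with slow large-scale diffusion.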

In 1859, Clausius’s paper came to the attention of the then-28-year-old James Clerk Maxwell, who had grown up in Scotland, done the Mathematical Tripos in Cambridge, and was now back in Scotland as professor of “natural philosophy” at Aberdeen. Maxwell had already worked on things like elasticity theory, color vision, the mechanics of tops, the dynamics of the rings of Saturn and electromagnetism—having published his first paper (on geometry) at age 14. And, by the way, Maxwell was quite a “diagrammist”—and his early papers include all sorts of pictures that he drew:

But in 1859 Maxwell applied his talents to what he called the “dynamical theory of gases”:

He models molecules as hard spheres, and sets about computing the “statistical” results of their collisions:

And pretty soon he’s trying to compute the distribution of their velocities:

It’s a somewhat unconvincing (or, as Maxwell himself later put it, “precarious”) derivation (how does it work in 1D, for example?), but somehow it manages to produce what’s now known as the Maxwell distribution:

Maxwell observes that the distribution is the same as for “errors … in the ‘method of least squares’”:
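The connection to the "law of errors" can be checked numerically. In this sketch (modern notation, with an arbitrary unit width σ assumed), each of the three velocity components of a molecule is drawn independently from a Gaussian, and the resulting distribution of speeds is compared with the Maxwell form, proportional to v² e^(−v²/2σ²).

```python
import math
import random

def sample_speeds(n, sigma=1.0, seed=0):
    """Speeds of n molecules whose x, y, z velocity components are
    independent Gaussian "errors" with standard deviation sigma."""
    rng = random.Random(seed)
    return [math.sqrt(sum(rng.gauss(0.0, sigma) ** 2 for _ in range(3)))
            for _ in range(n)]

def maxwell_pdf(v, sigma=1.0):
    """Maxwell speed density: sqrt(2/pi) v^2 exp(-v^2 / (2 sigma^2)) / sigma^3."""
    return math.sqrt(2.0 / math.pi) * v * v * math.exp(-v * v / (2.0 * sigma ** 2)) / sigma ** 3

speeds = sample_speeds(50000)
mean_speed = sum(speeds) / len(speeds)
print(f"sampled mean speed: {mean_speed:.3f}")
print(f"Maxwell mean speed: {2.0 * math.sqrt(2.0 / math.pi):.3f}")

# Coarse histogram of sampled speeds against the analytic density
width = 0.5
for i in range(8):
    lo = i * width
    frac = sum(lo <= v < lo + width for v in speeds) / len(speeds)
    print(f"{lo:3.1f}-{lo + width:3.1f}  sampled {frac / width:.3f}  "
          f"formula {maxwell_pdf(lo + width / 2):.3f}")
```

Note that the sketch simply assumes the components are independent Gaussians, which is essentially the step in Maxwell's 1859 derivation that was later regarded as precarious.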

Maxwell didn’t get back to the dynamical theory of gases until 1866, but in the meantime he was making a “dynamical theory” of something else: what he called the electromagnetic field:

Even though he’d worked extensively with the inverse square law of gravity he didn’t like the idea of “action at a distance”, and for example he wanted magnetic field lines to have some underlying “material” manifestation

imagining that they might be associated with arrays of “molecular vortices”:

We now know, of course, that there isn’t this kind of “underlying mechanics” for the electromagnetic field. But—with shades of the story of Carnot—even though the underlying framework isn’t right, Maxwell successfully derives correct equations for the electromagnetic field—that are now known as Maxwell’s equations:

His statement of how the electromagnetic field “works” is highly reminiscent of the dynamical theory of gases:

But he quickly and correctly adds:

And a few sections later he derives the idea of general electromagnetic waves

noting that there’s no evidence that the medium through which he assumes they’re propagating has elasticity:

By the way, when it comes to gravity he can’t figure out how to make his idea of a “mechanical medium” work:

But in any case, after using it as an inspiration for thinking about electromagnetism, Maxwell in 1866 returns to the actual dynamical theory of gases, still feeling that he needs to justify looking at a molecular theory:

And now he gives a recognizable (and correct, so far as it goes) derivation of the Maxwell distribution:

He goes on to try to understand experimental results on gases, about things like diffusion, viscosity and conductivity. For some reason, Maxwell doesn’t want to think of molecules, as he did before, as hard spheres. And instead he imagines that they have “action at a distance” forces, which basically work like hard spheres if it’s an r⁻⁵ force law:

In the years that followed, Maxwell visited the dynamical theory of gases several more times. In 1871, a few years before he died at age 48, he wrote a textbook entitled Theory of Heat, which begins, in erudite fashion, discussing what “thermodynamics” should even be called:

Most of the book is concerned with the macroscopic “theory of heat”—though, as we’ll discuss later, in the very last chapter Maxwell does talk about the “molecular theory”, if in somewhat tentative terms.

“Deriving” the Second Law from Molecular Dynamics

The Second Law was in effect originally introduced as a formalization of everyday observations about heat. But the development of kinetic theory seemed to open up the possibility that the Second Law could actually be proved from the underlying mechanics of molecules. And this was something that Ludwig Boltzmann (1844–1906) embarked on towards the end of his physics PhD at the University of Vienna. In 1865 he’d published his first paper (“On the Movement of Electricity on Curved Surfaces”), and in 1866 he published his second paper, “On the Mechanical Meaning of the Second Law of Thermodynamics”:

The introduction promises “a purely analytical, perfectly general proof of the Second Law”. And what he seemed to imagine was that the equations of mechanics would somehow inevitably lead to motion that would reproduce the Second Law. And in a sense what computational irreducibility, rule 30, etc. now show is that in the end that’s indeed basically how things work. But the methods and conceptual framework that Boltzmann had at his disposal were very far away from being able to see that. And instead what Boltzmann did was to use standard mathematical methods from mechanics to compute average properties of cyclic mechanical motions—and then made the somewhat unconvincing claim that combinations of these averages could be related (e.g. via temperature as average kinetic energy) to “Clausius’s entropy”:

It’s not clear how much this paper was read, but in 1871 Boltzmann (now a professor of mathematical physics in Graz) published another paper entitled simply “On the Priority of Finding the Relationship between the Second Law of Thermodynamics and the Principle of Least Action” that claimed (with some justification) that Clausius’s then-newly-announced virial theorem was already contained in Boltzmann’s 1866 paper.

But back in 1868—instead of trying to get all the way to Clausius’s entropy—Boltzmann instead uses mechanics to get a generalization of Maxwell’s law for the distribution of molecular velocities. His paper “Studies on the Equilibrium of [Kinetic Energy] between [Point Masses] in Motion” opens by saying that while analytical mechanics has in effect successfully studied the evolution of mechanical systems “from a given state to another”, it’s had little to say about what happens when such systems “have been left moving on their own for a long time”. He intends to remedy that, and spends 47 pages—complete with elaborate diagrams and formulas about collisions between hard spheres—in deriving an exponential distribution of energies if one assumes “equilibrium” (or, more specifically, balance between forward and backward processes):

It’s notable that one of the mathematical approaches Boltzmann uses is to discretize (i.e. effectively quantize) things, then look at the “combinatorial” limit. (Based on his later statements, he didn’t want to trust “purely continuous” mathematics—at least in the context of discrete molecular processes—and wanted to explicitly “watch the limits happening”.) But in the end it’s not clear that Boltzmann’s 1868 arguments do more than the few-line functional-equation approach that Maxwell had already used. (Maxwell would later complain about Boltzmann’s “overly long” arguments.)
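A toy version of this kind of discretize-then-count argument can be written down exactly. It is offered in the spirit of the approach, not as Boltzmann's actual calculation: it assumes a fixed total number of identical energy quanta shared among n molecules, with every way of sharing them equally likely (both the setup and the function name are assumptions of the sketch). The probability that one chosen molecule holds k quanta then falls off almost geometrically in k, which becomes an exponential distribution of energies in the continuum limit.

```python
from math import comb

def energy_distribution(n_molecules, total_quanta):
    """Probability that one chosen molecule holds k quanta (k = 0..total_quanta),
    when every way of sharing the quanta among the molecules is equally likely."""
    total_ways = comb(total_quanta + n_molecules - 1, n_molecules - 1)
    return [comb(total_quanta - k + n_molecules - 2, n_molecules - 2) / total_ways
            for k in range(total_quanta + 1)]

# Illustrative sizes: 200 molecules sharing 1000 quanta (mean of 5 per molecule)
probs = energy_distribution(200, 1000)

print("k   p(k)      p(k+1)/p(k)")
for k in range(6):
    print(f"{k}   {probs[k]:.5f}   {probs[k + 1] / probs[k]:.4f}")
print(f"ratio for a geometric law with mean 5: {5 / 6:.4f}")
```

The successive ratios are nearly constant for energies small compared with the total, and the discreteness drops out as the numbers of molecules and quanta are taken large.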

Boltzmann’s 1868 paper had derived what the distribution of molecular energies should be “in equilibrium”. (In 1871 he was talking about “equipartition” not just of kinetic energy, but also of energies associated with “internal motion” of polyatomic molecules.) But what about the approach to equilibrium? How would an initial distribution of molecular energies evolve over time? And would it always end up at the exponential (“Maxwell–Boltzmann”) distribution? These are questions deeply related to a microscopic understanding of the Second Law. And they’re what Boltzmann addressed in 1872 in his 22nd published paper “Further Studies on the Thermal Equilibrium of Gas Molecules”:

Boltzmann explains that:

Maxwell already found the value Av² e^(–Bv²) [for the distribution of velocities] … so that the probability of different velocities is given by a formula similar to that for the probability of different errors of observation in the theory of the method of least squares. The first proof which Maxwell gave for this formula was recognized to be incorrect even by himself. He later gave a very elegant proof that, if the above distribution has once been established, it will not be changed by collisions. He also tries to prove that it is the only velocity distribution that has this property. But the latter proof appears to me to contain a false inference. It has still not yet been proved that, whatever the initial state of the gas may be, it must always approach the limit found by Maxwell. It is possible that there may be other possible limits. This proof is easily obtained, however, by the method which I am about to explain…

(He gives a long footnote explaining why Maxwell might be wrong, talking about how a sequence of collisions might lead to a “cycle of velocity states”—which Maxwell hasn’t proved will be traversed with equal probability in each direction. Ironically, this is actually already an analog of where things are going to go wrong with Boltzmann’s own argument.)

The main idea of Boltzmann’s paper is not to assume equilibrium, but instead to write down an equation (now called the Boltzmann Transport Equation) that explicitly describes how the velocity (or energy) distribution of molecules will change as a result of collisions. He begins by defining infinitesimal changes in time:

He then goes through a rather elaborate analysis of velocities before and after collisions, and how to integrate over them, and eventually winds up with a partial differential equation for the time variation of the energy distribution (yes, he confusingly uses x to denote energy)—and argues that Maxwell’s exponential distribution is a stationary solution to this equation:

A few paragraphs further on, something important happens: Boltzmann introduces a function that here he calls E, though later he’ll call it H:

Ten pages of computation follow

and finally Boltzmann gets his main result: if the velocity distribution evolves according to his equation, H can never increase with time, with its rate of change becoming zero for the Maxwell distribution. In other words, he is saying that he’s proved that a gas will always (“monotonically”) approach equilibrium—which seems awfully like some kind of microscopic proof of the Second Law.
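One piece of this can be checked directly without solving Boltzmann's equation. The sketch below does not simulate the time evolution at all. It takes the modern form H = ∫ f ln f dv for a one-dimensional velocity distribution (a simplifying assumption), and compares three distributions with the same mean kinetic energy: a Gaussian (the 1D analog of the Maxwell distribution), a flat one, and a two-sided exponential. The Gaussian gives the smallest H, consistent with its being the end point of a process in which H can only decrease.

```python
import math

def h_functional(f, lo=-12.0, hi=12.0, n=200000):
    """Midpoint-rule estimate of H = integral of f(v) ln f(v) dv."""
    dv = (hi - lo) / n
    total = 0.0
    for i in range(n):
        fv = f(lo + (i + 0.5) * dv)
        if fv > 0.0:
            total += fv * math.log(fv) * dv
    return total

# Three velocity distributions, each with zero mean and unit variance
def gaussian(v):
    return math.exp(-v * v / 2.0) / math.sqrt(2.0 * math.pi)

def uniform(v):
    half_width = math.sqrt(3.0)
    return 1.0 / (2.0 * half_width) if abs(v) <= half_width else 0.0

def two_sided_exponential(v):
    b = 1.0 / math.sqrt(2.0)
    return math.exp(-abs(v) / b) / (2.0 * b)

h_gauss = h_functional(gaussian)
h_uniform = h_functional(uniform)
h_exp = h_functional(two_sided_exponential)

print(f"H for Gaussian (Maxwell-like): {h_gauss:.4f}")
print(f"H for two-sided exponential  : {h_exp:.4f}")
print(f"H for uniform                : {h_uniform:.4f}")
```

The comparison says nothing about whether a real gas actually gets to the minimum, which is exactly the part of the argument that depends on the assumptions built into the transport equation.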

But then Boltzmann makes a bolder claim:

It has thus been rigorously proved that, whatever the initial distribution of kinetic energy may be, in the course of a very long time it must always necessarily approach the one found by Maxwell. The procedure used so far is of course nothing more than a mathematical artifice employed in order to give a rigorous proof of a theorem whose exact proof has not previously been found. It gains meaning by its applicability to the theory of polyatomic gas molecules. There one can again prove that a certain quantity E can only decrease as a consequence of molecular motion, or in a limiting case can remain constant. One can also prove that for the atomic motion of a system of arbitrarily many material points there always exists a certain quantity which, in consequence of any atomic motion, cannot increase, and this quantity agrees up to a constant factor with the value found for the well-known integral ∫dQ/T in my [1871] paper on the “Analytical proof of the 2nd law, etc.”. We have therefore prepared the way for an analytical proof of the Second Law in a completely different way from those previously investigated. Up to now the object has been to show that ∫dQ/T = 0 for reversible cyclic processes, but it has not been proved analytically that this quantity is always negative for irreversible processes, which are the only ones that occur in nature. The reversible cyclic process is only an ideal, which one can more or less closely approach but never completely attain. Here, however, we have succeeded in showing that ∫dQ/T is in general negative, and is equal to zero only for the limiting case, which is of course the reversible cyclic process (since if one can go through the process in either direction, ∫dQ/T cannot be negative).

In other words, he’s saying that the quantity H that he’s defined microscopically in terms of velocity distributions can be identified (up to a sign) with the entropy that Clausius defined through ∫dQ/T. He says that he’ll show this in the context of analyzing the mechanics of polyatomic molecules.

But first he’s going to take a break and show that his derivation doesn’t need to assume continuity. In a pre-quantum-mechanics pre-cellular-automaton-fluid kind of way he replaces all the integrals by limits of sums of discrete quantities (i.e. he’s quantizing kinetic energy, etc.):

He says that this discrete approach makes everything clearer, and quotes Lagrange’s derivation of vibrations of a string as an example of where this has happened before. But then he argues that everything works out fine with the discrete approach, and that H still decreases, with the Maxwell distribution as the only possible end point. As an aside, he makes a jab at Maxwell’s derivation, pointing out that with Maxwell’s functional equation:

… there are infinitely many other solutions, which are not useful however since ƒ(x) comes out negative or imaginary for some values of x. Hence, it follows very clearly that Maxwell’s attempt to prove a priori that his solution is the only one must fail, since it is not the only one but rather it is the only one that gives purely positive probabilities, and therefore the only useful one.

But finally—after another aside about computing thermal conductivities of gases—Boltzmann digs into polyatomic molecules, and his claim about H being related to entropy. There’s another 26 pages of calculations, and then we get to a section entitled “Solution of Equation (81) and Calculation of Entropy”. More pages of calculation about polyatomic molecules ensue. But finally we’re computing H, and, yes, it agrees with the Clausius result—but anticlimactically he’s only dealing with the case of equilibrium for monatomic molecules, where we already knew we got the Maxwell distribution:

And now he decides he’s not talking about polyatomic molecules anymore, and instead:

In order to find the relation of the quantity [H] to the second law of thermodynamics in the form ∫dQ/T < 0, we shall interpret the system of mass points not, as previously, as a gas molecule, but rather as an entire body.

But then, in the last couple of pages of his paper, Boltzmann pulls out another idea. He’s discussed the concept that polyatomic molecules (or, now, whole systems) can be in many different configurations, or “phases”. But now he says: “We shall replace [our] single system by a large number of equivalent systems distributed over many different phases, but which do not interact with each other”. In other words, he’s introducing the idea of an ensemble of states of a system. And now he says that instead of looking at the distribution just for a single velocity, we should do it for all velocities, i.e. for the whole “phase” of the system.

[These distributions] may be discontinuous, so that they have large values when the variables are very close to certain values determined by one or more equations, and otherwise vanishingly small. We may choose these equations to be those that characterize visible external motion of the body and the kinetic energy contained in it. In this connection it should be noted that the kinetic energy of visible motion corresponds to such a large deviation from the final equilibrium distribution of kinetic energy

that it leads to an infinity in H, so that from the point of view of the Second Law of thermodynamics it acts like heat supplied from an infinite temperature.

There are a bunch of ideas swirling around here. Phase-space density (cf. Liouville’s equation). Coarse-grained variables. Microscopic representation of mechanical work. Etc. But the paper is ending. There’s a discussion about H for systems that interact, and how there’s an equilibrium value achieved. And finally there’s a formula for entropy

that Boltzmann said “agrees … with the expression I found in my previous [1871] paper”.

So what exactly did Boltzmann really do in his 1872 paper? He introduced the Boltzmann Transport Equation which allows one to compute at least certain non-equilibrium properties of gases. But is his ƒ log ƒ quantity really what we can call “entropy” in the sense Clausius meant? And is it true that he’s proved that entropy (even in his sense) increases? A century and a half later there’s still a remarkable level of confusion around both these issues.

But in any case, back in 1872 Boltzmann’s “minimum theorem” (now called his “H theorem”) created quite a stir. But after some time there was an objection raised, which we’ll discuss below. And partly in response to this, Boltzmann (after spending time working on microscopic models of electrical properties of materials—as well as doing some actual experiments) wrote another major paper on entropy and the Second Law in 1877:

The translated title of the paper is “On the Relation between the Second Law of Thermodynamics and Probability Theory with Respect to the Laws of Thermal Equilibrium”. And at the very beginning of the paper Boltzmann makes a statement that was pivotal for future discussions of the Second Law: he says it’s now clear to him that an “analytical proof” of the Second Law is “only possible on the basis of probability calculations”. Now that we know about computational irreducibility and its implications one could say that this was the point where Boltzmann and those who followed him went off track in understanding the true foundations of the Second Law. But Boltzmann’s idea of introducing probability theory was effectively what launched statistical mechanics, with all its rich and varied consequences.

Boltzmann makes his basic claim early in the paper

with the statement (quoting from a comment in a paper he’d written earlier the same year) that “it is clear” (always a dangerous thing to say!) that in thermal equilibrium all possible states of the system—say, spatially uniform and nonuniform alike—are equally probable

… comparable to the situation in the game of Lotto where every single quintet is as improbable as the quintet 12345. The higher probability that the state distribution becomes uniform with time arises only because there are far more uniform than nonuniform state distributions…
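The counting behind the Lotto remark can be made concrete with a small sketch. The setup is an assumption made only for illustration: 20 labeled molecules, each sitting in either the left or the right half of a box, with every individual assignment taken to be equally likely.

```python
from math import comb

N = 20  # hypothetical number of molecules, each in the left or right half

# Every individual assignment of molecules to halves is equally (im)probable.
p_single = 1 / 2**N

# Number of distinct assignments with exactly k molecules on the left.
counts = {k: comb(N, k) for k in range(N + 1)}

p_all_left = counts[N] * p_single         # only one assignment does this
p_even_split = counts[N // 2] * p_single  # many assignments share this split
# Splits with 8 to 12 molecules on the left, i.e. roughly uniform.
p_near_uniform = sum(counts[k] for k in range(8, 13)) * p_single

print(f"any one specific assignment: {p_single:.2e}")
print(f"all molecules on the left:   {p_all_left:.2e}")
print(f"exact 10/10 split:           {p_even_split:.3f}")
print(f"between 8 and 12 on left:    {p_near_uniform:.3f}")
```

The specific assignment with everything on the left is exactly as improbable as any specific evenly spread assignment. The roughly uniform outcome wins only because so many different assignments look like it.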

He goes on:

[Thus] it is possible to calculate the thermal equilibrium state by finding the probability of the different possible states of the system. The initial state will in most cases be highly improbable but from it the system will always rapidly approach a more probable state until it finally reaches the most probable state, i.e., that of thermal equilibrium. If we apply this to the Second Law we will be able to identify the quantity which is usually called entropy with the probability of the particular state…

He’s talked about thermal equilibrium, even in the title, but now he says:

… our main purpose here is not to limit ourselves to thermal equilibrium, but to explore the relationship of the probabilistic formulation to the [Second Law].

He says his goal is to calculate the probability distribution for different states, and he’ll start with

as simple a case as possible, namely a gas of rigid absolutely elastic spherical molecules trapped in a container with absolutely elastic walls. (Which interact with central forces only within a certain small distance, but not otherwise; the latter assumption, which includes the former as a special case, does not change the calculations in the least).

In other words, yet again he’s going to look at hard sphere gases. But, he says:

Even in this case, the application of probability theory is not easy. The number of molecules is not infinite, in a mathematical sense, yet the number of velocities each molecule is capable of is effectively infinite. Given this last condition, the calculations are very difficult; to facilitate understanding, I will, as in earlier work, consider a limiting case.

And this is where he “goes discrete” again—allowing (“cellular-automaton-style”) only discrete possible velocities for each molecule:

He says that upon colliding, two molecules can exchange these discrete velocities, but nothing more. As he explains, though:

Even if, at first sight, this seems a very abstract way of treating the problem, it rapidly leads to the desired objective, and when you consider that in nature all infinities are but limiting cases, one assumes each molecule can behave in this fashion only in the limiting case where each molecule can assume more and more values of the velocity.

But now—much like in an earlier paper—he makes things even simpler, saying he’s going to ignore velocities for now, and just say that the possible energies of molecules are “in an arithmetic progression”:

He plans to look at collisions, but first he just wants to consider the combinatorial problem of distributing these energies among n molecules in all possible ways, subject to the constraint of having a certain fixed total energy. He sets up a specific example, with 7 molecules, total energy 7, and maximum energy per molecule 7—then gives an explicit table of all possible states (up to, as he puts it, “immaterial permutations of molecular labels”):
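A table of this kind can be reconstructed with a short sketch. The code below is an illustration rather than Boltzmann’s own procedure, and its function names are hypothetical. It lists every way of splitting 7 energy units among 7 molecules, ignoring which molecule is which, and for each one counts the number of distinct labelings as 7!/(w₀! w₁! …), where wₖ is the number of molecules holding k units.

```python
from math import factorial

def partitions(total, max_part):
    """Yield partitions of `total` into parts no larger than `max_part`."""
    if total == 0:
        yield []
        return
    for part in range(min(total, max_part), 0, -1):
        for rest in partitions(total - part, part):
            yield [part] + rest

def state_table(n_molecules, total_energy):
    """Return (occupation numbers, permutation count) for every state."""
    rows = []
    for parts in partitions(total_energy, total_energy):
        if len(parts) > n_molecules:
            continue  # would need more energy-carrying molecules than exist
        # occupation[k] = number of molecules holding k energy units
        occupation = [0] * (total_energy + 1)
        for p in parts:
            occupation[p] += 1
        occupation[0] = n_molecules - len(parts)
        # Distinct labelings: n! divided by the factorial of each occupation.
        perms = factorial(n_molecules)
        for w in occupation:
            perms //= factorial(w)
        rows.append((occupation, perms))
    return rows

table = state_table(7, 7)
for occupation, perms in table:
    print(occupation, perms)
print("states:", len(table), "total labelings:", sum(p for _, p in table))
```

For the 7-molecule, 7-unit case this gives 15 states and 1716 labelings in total, with the largest single count, 420, belonging to the state with three molecules at energy 0, two at energy 1, one at energy 2 and one at energy 3.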

Tables like this had been common for nearly two centuries in combinatorial mathematics books like Jacob Bernoulli’s (1655–1705) Ars Conjectandi

but this might have been the first place such a table had appeared in a paper about fundamental physics.

And now Boltzmann goes into an analysis of the distribution of states, of the kind that’s now long been standard in textbooks of statistical physics, but would then have been quite unfamiliar to the pure-calculus-based physicists of the time:

He derives the average energy per molecule, as well as the fluctuations:

He says that “of course” the real interest is in the limit of an infinite number of molecules, but he still wants to show that for “moderate values” the formulas remain quite accurate. And then (even without Wolfram Language!) he’s off finding (using Newton’s method it seems) approximate roots of the necessary polynomials:

Just to show how it all works, he considers a slightly larger case as well:

Now he’s computing the probability that a given molecule has a particular energy

and determining that in the limit it’s an exponential

that is, as he says, “consistent with that known from gases in thermal equilibrium”.
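The approach to an exponential can be checked numerically. This is a sketch under stated assumptions, not a transcription of Boltzmann’s formulas: it assumes integer energy units, takes every assignment of energies to labeled molecules with the right total as equally likely, and uses hypothetical function names. Under those assumptions the chance that one given molecule holds k units is a ratio of binomial coefficients, and for many molecules at a fixed mean energy it approaches a geometric (discrete exponential) law.

```python
from math import comb

def p_energy(k, n, total):
    """Chance that one given molecule holds k units (all assignments equal)."""
    # The other n - 1 molecules share the remaining total - k units.
    return comb(total - k + n - 2, n - 2) / comb(total + n - 1, n - 1)

def geometric(k, mean):
    """Assumed large-n limit at fixed mean energy per molecule."""
    return (1 / (1 + mean)) * (mean / (1 + mean)) ** k

for n in (7, 200):
    print(f"n = {n}, total energy = {n}")
    for k in range(5):
        exact = p_energy(k, n, n)
        limit = geometric(k, 1.0)
        print(f"  k = {k}: exact {exact:.4f}   geometric {limit:.4f}")
```

With only 7 molecules the exact values already fall off roughly geometrically, and with 200 they agree with the limiting form to within about a percent for small k.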

He claims that in order to really get a “mechanical theory of heat” it’s necessary to take a continuum limit. And here he concludes that thermal equilibrium is achieved by maximizing the quantity Ω (where the “l” stands for log, so this is basically ƒ log ƒ):

He explains that Ω is basically the log of the number of possible permutations, and that it’s “of special importance”, and he’ll call it the “permutability measure”. He immediately notes that “the total permutability measure of two bodies is equal to the sum of the permutability measures of each body”. (Note that Boltzmann’s Ω isn’t the modern total-number-of-states Ω; confusingly, that’s essentially the exponential of Boltzmann’s Ω.)
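The link between “log of the number of permutations” and an ƒ log ƒ expression comes from Stirling’s approximation, and it can be checked with a small sketch. The occupation numbers below are made up for illustration and the function names are hypothetical; the comparison is between the exact log of n!/(w₀! w₁! …) and the estimate −Σ wᵢ ln(wᵢ/n).

```python
from math import factorial, lgamma, log

def log_permutations(occupations):
    """ln( n! / (w_0! w_1! ...) ), using lgamma to avoid huge factorials."""
    n = sum(occupations)
    return lgamma(n + 1) - sum(lgamma(w + 1) for w in occupations)

def minus_f_log_f(occupations):
    """Stirling-style estimate: -sum of w_i * ln(w_i / n)."""
    n = sum(occupations)
    return -sum(w * log(w / n) for w in occupations if w > 0)

# Hypothetical occupation numbers for 10000 molecules over five energy levels.
big = [5000, 2500, 1250, 625, 625]
ratio = log_permutations(big) / minus_f_log_f(big)
print("exact log-count / estimate:", round(ratio, 4))

# Additivity: for two independent bodies the permutation counts multiply,
# so their logs (the "permutability measures") add.
def permutations(occupations):
    count = factorial(sum(occupations))
    for w in occupations:
        count //= factorial(w)
    return count

body_a, body_b = [3, 2, 1, 1], [4, 2, 1]
combined = log(permutations(body_a) * permutations(body_b))
separate = log_permutations(body_a) + log_permutations(body_b)
print("combined vs sum of parts:", round(combined, 6), round(separate, 6))
```

For large occupation numbers the ratio is close to 1, which is why the continuum version of the permutability measure takes an ƒ log ƒ form.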

He goes through some discussion of how to handle extra degrees of freedom in polyatomic molecules, but then he’s on to the main event: arguing that Ω is (essentially) the entropy. It doesn’t take long:

Basically he just says that in equilibrium the probability ƒ(…) for a molecule to have a particular velocity is given by the Maxwell distribution, then he substitutes this into the formula for Ω, and shows that indeed, up to a constant, Ω is exactly the “Clausius entropy” ∫dQ/T.
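The substitution can be checked numerically in a stripped-down setting. This is a sketch with loudly stated assumptions rather than Boltzmann’s derivation: one velocity component only, units in which the molecular mass and Boltzmann’s constant are both 1, heating at constant volume, and hypothetical function names. It compares the change in −∫ƒ ln ƒ dv between two temperatures with the Clausius integral ∫dQ/T for one degree of freedom, where dQ = ½ dT in these units.

```python
from math import exp, log, pi, sqrt

def maxwell_1d(v, T):
    """One-component Maxwell distribution with mass = k = 1 (assumed units)."""
    return exp(-v * v / (2 * T)) / sqrt(2 * pi * T)

def omega(T, half_width=12.0, steps=20000):
    """Midpoint-rule estimate of -integral of f ln f dv."""
    vmax = half_width * sqrt(T)
    dv = 2 * vmax / steps
    total = 0.0
    for i in range(steps):
        v = -vmax + (i + 0.5) * dv
        f = maxwell_1d(v, T)
        total -= f * log(f) * dv
    return total

T1, T2 = 1.0, 2.0
delta_omega = omega(T2) - omega(T1)
# Clausius integral for one degree of freedom: integral of (1/2) dT / T.
clausius = 0.5 * log(T2 / T1)

print("change in -integral f ln f:", round(delta_omega, 6))
print("Clausius integral dQ/T:    ", round(clausius, 6))
```

The two numbers agree, which is the equilibrium statement in miniature: once ƒ is the Maxwell distribution, differences in the ƒ log ƒ quantity track differences in the Clausius entropy, with the additive constant dropping out.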

So, yes, in equilibrium Ω seems to be giving the entropy. But then Boltzmann makes a bit of a jump. He says that in processes that aren’t reversible both “Clausius entropy” and Ω will increase, and can still be identified, and he enunciates the general principle, printed in his paper in special double-spaced form:

… [In] any system of bodies that undergoes state changes … even if the initial and final states are not in thermal equilibrium … the total permutability measure for the bodies will continually increase during the state changes, and can remain constant only so long as all the bodies during the state changes remain infinitely close to thermal equilibrium (reversible state changes).

In other words, he’s asserting that Ω behaves the same way entropy is said to behave according to the Second Law. He gives various thought experiments about gases in boxes with dividers, gases under gravity, etc. And finally concludes that, yes, the relationship of entropy to Ω “applies to the general case”.

There’s one final paragraph in the paper, though: