A New Kind of Notebook

We originally invented the concept of “Notebooks” back in 1987, for Version 1.0 of Mathematica. And over the past 36 years, Notebooks have proved to be an incredibly convenient medium in which to do—and publish—work (and indeed, I, for example, have created hundreds of thousands of them). And, yes, eventually the basic concepts of Notebooks were widely copied—though still not even with everything we had back in 1987!

Well, now there’s a new challenge and opportunity for Notebooks: integrating LLM functionality into them. It’s an interesting design problem, and I’m pretty pleased with what we’ve come up with. And today we’re introducing Chat Notebooks as a new kind of Notebook that supports LLM-based chat functionality.

The functionality described here will be built into the upcoming version of Wolfram Language (Version 13.3). To install it in the now-current version (Version 13.2), use

and

You will also need an API key for the OpenAI LLM or another LLM.

Just as with ordinary Notebooks, there are many ways to use Chat Notebooks. One that I’m particularly excited about—especially because of its potential to open up computational language to so many people—is for providing interactive Wolfram Language assistance. But I’ll talk about that separately. And instead here I’ll concentrate on the (already very rich) general concept of Chat Notebooks.

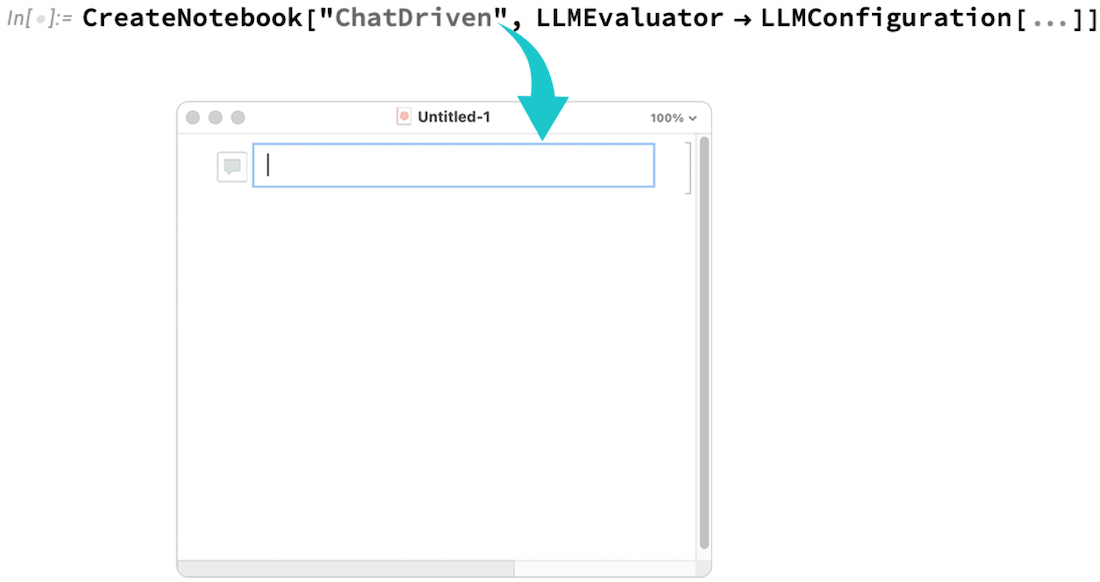

The basic idea is simple: there’s a new kind of cell—a chat cell—that communicates with an LLM. (In what we’re calling “Chat-Driven Notebooks” chat cells are the default; in “Chat-Enabled Notebooks” you get a chat cell by pressing '—i.e. quote—when you first create the cell.)


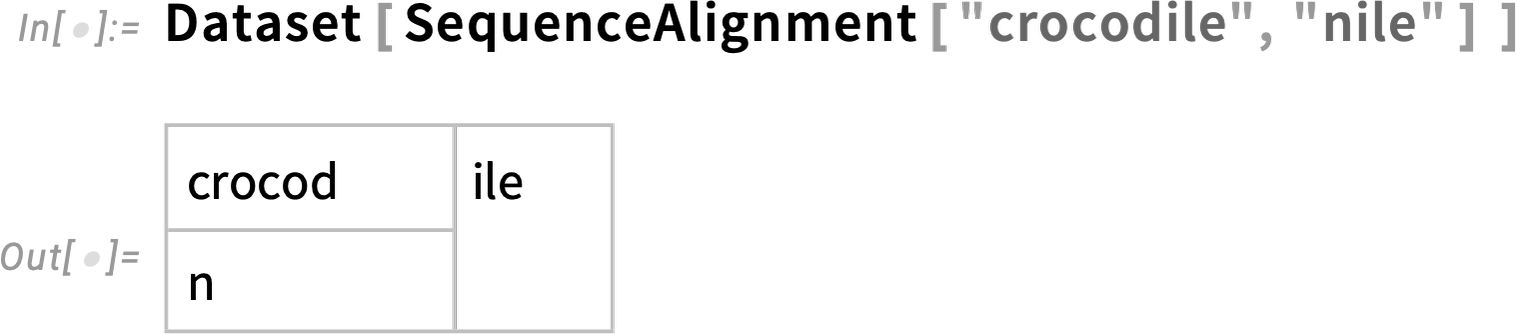

In a standard Notebook, we’re used to having input cells containing Wolfram Language, together with output cells that give the results from evaluating that Wolfram Language input:

And at a basic level, a chat cell is just a type of cell that uses an LLM—rather than the Wolfram Language kernel—to “evaluate” its output. And indeed, in a Chat Notebook, the way you send your input to the LLM is to press Shift+Enter, just like for Wolfram Language input.

And just like for standard input and output cells, chat input and output cells are grouped, so you can select them together, or double-click one of them to open or close them:

One immediate difference with chat cells is that whereas an ordinary output cell is produced all at once, the contents of a chat output cell progressively “stream in” a word (or so) at a time, as the LLM generates it.

There’s another important difference too. In an ordinary Notebook there’s a “temporal thread of evaluation” in which inputs and outputs appear in the sequence they’re generated in time (as indicated by In[n] and Out[n]), quite independent of where they are placed in the Notebook. Thus, for example, if you evaluate x = 5 in an input cell, then subsequently ask for the value of x, the result will be 5 wherever in the notebook you ask—even if it’s above the “x = 5” cell:

But with chat cells it’s a different story. Now the order of cells in the Notebook matters. The “thread of a chat” is determined not by when chat cells were evaluated, but instead by the order in which they appear in the notebook.

Going down the notebook, successive chat cells are “aware” of what’s in cells above them. But even if we add it later, a cell placed at the top won’t “know about” anything in cells below it.

All of this reflects an important difference between ordinary Wolfram Language evaluation and “LLM evaluation”. In Wolfram Language evaluation, the Wolfram Language kernel always has an internal state, and whatever you do in the notebook is in a sense merely a window into that state. But for LLM evaluation the whole state is determined by the actual content of the notebook.

And each time you do an LLM evaluation, the Notebook system will package up all the content above the cell in which you’re doing the evaluation, and send it to the LLM. The LLM in a sense never knows anything about time history; all it knows is what’s in the notebook when the LLM evaluation is done.
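To make the "order in the notebook, not order in time" idea concrete, here is a minimal toy model in Python. It is only a sketch, not how the Notebook system is actually implemented: the `Cell` class, the `evaluated_at` field and the `llm_context` function are all hypothetical names introduced for illustration, and the sketch assumes that the evaluated cell's own content is sent along with everything above it.

```python
from dataclasses import dataclass


@dataclass
class Cell:
    kind: str          # e.g. "chat" or "text" (hypothetical labels)
    content: str
    evaluated_at: int  # hypothetical timestamp; deliberately never consulted


def llm_context(cells, index):
    """Content packaged up for an LLM evaluation of cells[index].

    Only position matters: the result is the content of every cell above
    the evaluated cell, followed by that cell's own content.
    """
    return [cell.content for cell in cells[: index + 1]]


# The question was evaluated first (evaluated_at=1), and the statement was
# added above it later (evaluated_at=2).
notebook = [
    Cell("chat", "My favorite animal is the wombat.", evaluated_at=2),
    Cell("chat", "What is my favorite animal?", evaluated_at=1),
]

print(llm_context(notebook, 1))  # the question "sees" the statement above it
print(llm_context(notebook, 0))  # the top cell "sees" nothing below it
```

In this model, reevaluating the second cell picks up the first one even though the first was created later, while the first cell never learns about anything below it.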

There are many consequences of this. One is that you can edit the chat history and “reevaluate with new history”. When the reevaluation “overwrites” a cell, the Chat Notebook will maintain the older version, and you can get back to it by pressing the ◄► arrows (for purposes of LLM evaluation, the chat history is always considered to be what’s showing when you do the evaluation):
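One way to picture the "overwrite but keep the older version" behavior is as a chat output that holds a list of alternatives plus a pointer to the one currently showing. The Python sketch below is purely illustrative: the `ChatOutput` class and its method names are hypothetical, and it assumes that a reevaluation shows the newest version by default.

```python
class ChatOutput:
    """Toy model of a chat output cell that keeps alternate versions."""

    def __init__(self, first_response):
        self.versions = [first_response]
        self.shown = 0  # index of the version currently showing

    def reevaluate(self, new_response):
        # A reevaluation adds a new version and shows it; older ones are kept.
        self.versions.append(new_response)
        self.shown = len(self.versions) - 1

    def previous(self):
        # Step back one version (stops at the oldest).
        self.shown = max(self.shown - 1, 0)

    def next(self):
        # Step forward one version (stops at the newest).
        self.shown = min(self.shown + 1, len(self.versions) - 1)

    def visible(self):
        # What later LLM evaluations would treat as the chat history.
        return self.versions[self.shown]


out = ChatOutput("first answer")
out.reevaluate("second answer")
out.previous()
print(out.visible())
```

The key point the model captures is that only `visible()` counts as history: whichever version is showing at evaluation time is what later chat cells get to see.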


LLM evaluations pick up content that appears above them in the notebook. But there’s an important way to limit this, and to separate or “modularize” chats: the idea of a chatblock.

You can begin a chatblock by pressing ~ (tilde) when you create a cell in the Notebook. You’ll then get a chatblock separator.

And the point is that this indicates the beginning of a new chat. When you evaluate a chat cell below this separator, it’ll only use content up to the separator—so that means that in a single Chat Notebook, you can have any number of independent “chat sessions”, delimited by chatblock separators:

In a sense LLM evaluation is a very Notebook-centric form of evaluation, always based on the sequence of content that appears in the Notebook. As we’ll discuss below, there are different detailed forms of LLM evaluation, but in most cases the evaluation will operate not just on chat cells, but on all cells that appear above it in a given chatblock.
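The effect of a chatblock separator can be sketched as a simple truncation rule: walk upward from the cell being evaluated, and stop at the nearest separator. The following Python toy model is an assumption-laden illustration, not the actual implementation; the `SEPARATOR` marker, the tuple layout and the `chatblock_context` function are hypothetical.

```python
SEPARATOR = "chatblock-separator"  # hypothetical marker for a separator cell


def chatblock_context(cells, index):
    """Content an LLM evaluation of cells[index] would draw on.

    `cells` is a list of (kind, content) pairs in notebook order. All cell
    kinds count (not just chat cells), but nothing at or above the nearest
    separator above the evaluated cell is included.
    """
    start = 0
    for i in range(index, -1, -1):
        if cells[i][0] == SEPARATOR:
            start = i + 1
            break
    return [content for _kind, content in cells[start : index + 1]]


notebook = [
    ("chat", "Let's talk about wombats."),
    ("text", "A note about wombats."),
    (SEPARATOR, ""),
    ("text", "A note about lemurs."),
    ("chat", "What animal were we discussing?"),
]

print(chatblock_context(notebook, 4))  # only the lemur chatblock
print(chatblock_context(notebook, 1))  # only the wombat chatblock
```

Note that the text cells are included alongside the chat cells, reflecting the point that evaluation operates on all cells above it within the chatblock.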

Another difference from ordinary evaluation is that LLM evaluation is often not repeatable. Yes, if there are random numbers, or external inputs, ordinary Wolfram Language evaluation may not be repeatable. But the core evaluation process in Wolfram Language is completely repeatable. In an LLM, however, that may not be the case. For example, particularly if the LLM is operated with a nonzero value of its “temperature” parameter (which is usually the default), it’s pretty much guaranteed to give different results every time an evaluation is done.
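For readers who want a feel for what "temperature" does, here is a small self-contained Python sketch of the standard temperature-scaled softmax that turns scores for candidate next words into probabilities. The scores are made up for illustration, and a real LLM works with a vastly larger vocabulary; this is not a description of how any particular LLM service is implemented.

```python
import math


def softmax_with_temperature(scores, temperature):
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    scaled = [s / temperature for s in scores]
    top = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


scores = [2.0, 1.0, 0.0]  # made-up scores for three candidate next words

warm = softmax_with_temperature(scores, 1.0)
cold = softmax_with_temperature(scores, 0.1)

print(warm)  # probability is spread over several candidates
print(cold)  # nearly all probability is on the top candidate
```

With the higher temperature, several candidates have a real chance of being sampled at each step, so repeated evaluations drift apart quickly. As the temperature approaches zero, the top candidate dominates and sampling becomes close to deterministic.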

And in using Chat Notebooks, it’ll sometimes be convenient to just try to evaluate a chat cell multiple times until you get what you want. (You can go between different choices using the ◄► arrows.)

The default in Chat Notebooks is always to use previous cells as “context” for any chat input you provide. But there’s also a mechanism for having “side chats” that don’t use (or affect) context. Instead of just typing to get a chat cell (in a Chat-Driven Notebook), start the cell with ' (“quote”) to get a “side chat” cell (in a Chat-Enabled Notebook, it’s ' to get a chat cell, and '' to get a side chat cell):

Who Are You Gonna Talk To?

When you evaluate a chat cell, you’ll get a response from an LLM. But what determines the “persona” that’ll be used for the LLM—or in general how the LLM is configured? There are multiple levels at which this can be specified—from overall Preference settings to Chat Notebook settings to chatblocks to individual chat cells.

For a chatblock, for example, click the little chat icon to the left and you’ll see a menu of possible personas:

Pick a particular persona and its icon will show up “perched” on the chatblock separator—and then in every chat cell that follows it’ll be that persona that by default responds to you:

You can tell it’s that persona responding because its icon will show up as the “label” for the response. (It’ll also appear next to your input if you hover over the chat cell icon).

We mentioned in passing above that there are two kinds of Chat Notebooks you can create (e.g. with the File > New menu): Chat-Enabled Notebooks and Chat-Driven Notebooks. In future versions of the Wolfram Notebook system, Chat-Enabled Notebooks will probably be the standard default for all new notebooks, but for now it’s something you have to explicitly choose.

So what is a Chat-Enabled Notebook, and how is it different from a Chat-Driven Notebook? The basic point is that a Chat-Enabled Notebook is intended to be used just like Wolfram Notebooks have been used for 35 years—but with additional chat capabilities added. In a Chat-Enabled Notebook the default new cell type (assuming you’re using the default stylesheet) expects Wolfram Language input. To get a chat cell, you explicitly type ' (“quote”) at the beginning of the cell. And when you make that chat cell, it’ll by default be talking to the Code Assistant persona, ready to help you with generating Wolfram Language code.

A Chat-Driven Notebook is something different: it’s a notebook where chats are the primary content—and by default new cells are always chat cells, and there’s no particular expectation that you’ll be talking about things to do with Wolfram Language. There’s no special persona by default in a Chat-Driven Notebook, and instead one’s pretty much just talking to the generic LLM (though there’s some additional prompting about being used in a Notebook, etc.).
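The difference between the two kinds of Chat Notebook largely comes down to what a new cell becomes, given the first characters you type. As a toy summary in Python (with hypothetical labels `"ChatDriven"` and `"ChatEnabled"` for the notebook kinds, and a hypothetical `new_cell_kind` function; the real front end handles keystrokes, not strings):

```python
def new_cell_kind(typed, notebook_kind):
    """Which kind of cell is created, given the first characters typed."""
    if typed.startswith("~"):
        return "chatblock separator"
    if notebook_kind == "ChatDriven":
        # Chat cells are the default; a leading quote gives a side chat.
        return "side chat" if typed.startswith("'") else "chat"
    if notebook_kind == "ChatEnabled":
        # Wolfram Language input is the default; quotes opt in to chat.
        if typed.startswith("''"):
            return "side chat"
        if typed.startswith("'"):
            return "chat"
        return "Wolfram Language input"
    raise ValueError(f"unknown notebook kind: {notebook_kind!r}")


for kind in ("ChatDriven", "ChatEnabled"):
    for typed in ("hello", "'hello", "''hello", "~"):
        print(kind, repr(typed), "->", new_cell_kind(typed, kind))
```

The same keystroke (a single quote) means different things in the two kinds of notebook, which is exactly why it is worth keeping track of which kind you are in.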

If you want to talk to another persona, though, you can specify that in the menu of personas. There are a few personas listed by default. But there are many more in the Wolfram Prompt Repository. And from Add & Manage Personas you can open the personas section of the Prompt Repository:

Then you can go to a persona page, and press the Install button to install that persona in your session:

Now you’ll be able to select this persona from any chatblock, chat cell, etc. persona menu:

The Wolfram Prompt Repository contains a growing selection of curated contributed personas. But the Install from URL menu item also lets you install personas that have been independently deployed (for example in the Wolfram Cloud), and are available either publicly or for specific users. (As discussed elsewhere, you can create personas using a Prompt Resource Definition Notebook.)

Given a named persona—either defined in the Wolfram Prompt Repository, or that you’ve explicitly installed—you can always “direct chat” that persona in a particular chat cell by using @persona:

When you direct chat a persona in a particular chat cell, that persona gets sent the whole previous history in your current chatblock. But after that persona has responded, subsequent chat cells revert to using the current default persona. Nevertheless, any persona that you direct chat will automatically get installed in the list of personas you can use. Note, by the way, that direct chatting is an independent idea from side chats. Side chats don’t by default affect what persona you’re talking to, but give you a “localized” context, while direct chats affect the persona you’re talking to, but are “flowed into” the global history of the current chatblock.

Personas let you define all sorts of aspects of how you want an LLM to behave. But ultimately you also have to define the underlying LLM itself. What model should it use? With what “temperature”? etc. At the bottom of the same menus that list personas there’s an Advanced Settings item:

Like personas, these can be set at a chat cell level, chatblock level, notebook level—or globally, through Preferences settings. It’s typical to define things like authentication at the Preferences level. And ultimately everything about the configuration of an LLM is specified by a symbolic LLMConfiguration object.
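The layering of settings can be pictured as a cascade of lookups. The Python sketch below uses `collections.ChainMap` to model it, under the assumption (not spelled out above) that the most specific level wins: chat cell, then chatblock, then notebook, then Preferences. The setting names and values are hypothetical, and this is not a description of how an LLMConfiguration object is actually represented.

```python
from collections import ChainMap


def effective_settings(chat_cell, chatblock, notebook, preferences):
    """Merge settings, letting more specific levels override more general ones."""
    return dict(ChainMap(chat_cell, chatblock, notebook, preferences))


# Hypothetical setting names and values, purely for illustration.
preferences = {"model": "some-model", "temperature": 0.7, "persona": "Generic"}
notebook = {"persona": "CodeAssistant"}
chatblock = {"temperature": 0.2}
chat_cell = {}

settings = effective_settings(chat_cell, chatblock, notebook, preferences)
print(settings["persona"], settings["temperature"], settings["model"])
```

Here the chat cell specifies nothing itself, so it inherits its temperature from the chatblock, its persona from the notebook, and its model from Preferences.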

As we’ll discuss elsewhere, a very important additional feature of full LLMConfiguration objects is that they can specify “tools” that should be available to an LLM—essentially Wolfram Language APIs that the LLM should be able to send requests to in order to get computational results or computational actions.

Within a particular Wolfram Language session, you can specify a default LLM configuration by setting the value of $LLMEvaluator. You can also programmatically create a Chat Notebook with a specified LLM configuration using:

(This will make a chat-driven notebook; you can use "ChatEnabled" to make a chat-enabled notebook.)

Applying Functions in a Chat Notebook

As we discussed elsewhere, personas are ultimately just prompts. So when, for example, we say @Yoda we’re really just adding the “Yoda prompt” (i.e. LLMPrompt["Yoda"]) into a chat evaluation.

But there are all sorts of prompts that don’t correspond to what we’d normally think of as personas. For example, there are “modifier prompts”, like Emojified or SEOptimize or TweetConvert, that describe particular output we want to get. And in a Chat Notebook, we can add such modifier prompts just using #prompt:

The reason this works is that “under the hood”, a chat evaluation is effectively LLMSynthesize["input"], and adding either a persona or a modifier prompt is achieved with LLMSynthesize[LLMPrompt[ … ]].

You can add more than one modifier prompt just by putting in multiple # items. But what if a modifier prompt has a “parameter”, like in the case of LLMPrompt["Translated", "French"]? Chat Notebooks provide a syntax for that, with each parameter separated by |, as in #prompt|parameter:

Personas and modifiers are both intended to affect the output generated by an LLM in a chat. But the Wolfram Prompt Repository also contains “function prompts”, that are intended to operate on a specific piece of input you give. Function prompts are particularly suitable for programmatic use, as in:

But it’s also possible to use function prompts in Chat Notebooks. !prompt specifies a function prompt:

By default, a function prompt in a chat cell takes as its input the text you explicitly give in the chat cell (though it still “sees” previous history in the current chatblock). But it’s also common to want to put the input in a cell of its own. You can make a function prompt take its input from the previous cell in the notebook by using !prompt^:

But what if you want to feed the complete history (in the current chatblock) to a function prompt? You can do that by using ^^ instead of ^:

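And, yes, there are a lot of little notations in Chat Notebooks. One gets used to them quickly, but here—for convenience—are all of them collected in a table:

| Notation | Meaning |
| --- | --- |
| ' | new chat cell (in a Chat-Enabled Notebook); side chat cell (in a Chat-Driven Notebook) |
| '' | side chat cell (in a Chat-Enabled Notebook) |
| ~ | begin a new chatblock |
| @persona | direct chat with a persona |
| #prompt | add a modifier prompt |
| #prompt\|parameter | add a modifier prompt with a parameter |
| !prompt | apply a function prompt to the text in the chat cell |
| !prompt^ | apply a function prompt to the previous cell |
| !prompt^^ | apply a function prompt to the complete history in the current chatblock |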
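To show how these notations fit together, here is a rough Python sketch of a parser that pulls @, # and ! items out of a chat input. It is only an illustration of the syntax described above, not the parser Chat Notebooks actually use: the regular expression, the `parse_chat_input` function, the result layout and the function prompt name `SomePrompt` are all hypothetical, and the sketch assumes that items are separated from other text by whitespace and that | parameters may follow any item.

```python
import re

# An item starts at the beginning of the input or after whitespace:
# a sigil (@, # or !), a name, optional |parameters, optional ^ or ^^.
ITEM = re.compile(
    r"(?<!\S)(?P<sigil>[@#!])(?P<name>\w+)"
    r"(?P<params>(?:\|[^\s|^]+)*)(?P<carets>\^{0,2})"
)

INPUT_SOURCE = {"": "cell text", "^": "previous cell", "^^": "chatblock history"}


def parse_chat_input(text):
    """Split a chat input into persona, modifiers, function prompt and plain text."""
    result = {"persona": None, "modifiers": [], "function": None,
              "function_input": None, "text": ""}

    def handle(match):
        name = match["name"]
        params = [p for p in match["params"].split("|") if p]
        if match["sigil"] == "@":
            result["persona"] = name
        elif match["sigil"] == "#":
            result["modifiers"].append((name, params))
        else:  # "!" marks a function prompt; carets choose where input comes from
            result["function"] = (name, params)
            result["function_input"] = INPUT_SOURCE[match["carets"]]
        return ""  # remove the item from the plain text

    remaining = ITEM.sub(handle, text)
    result["text"] = " ".join(remaining.split())
    return result


print(parse_chat_input("@Yoda #Translated|French #Emojified How are you?"))
print(parse_chat_input("!SomePrompt^^"))
```

In this sketch the persona and modifier prompts simply accumulate alongside the remaining text, while a function prompt additionally records whether its input is the cell text, the previous cell, or the whole chatblock history.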

The Design of Chat Notebooks

One of the great long-term strengths of the Wolfram Language is the coherence of its design. And that design coherence extends not only across the language itself, but also to the whole system around the language, including Wolfram Notebooks. So what about Chat Notebooks? As we said above, Chat Notebooks represent a new kind of Notebook—one that has new kinds of requirements, and brings new design challenges. But as has happened so many times before, the whole Wolfram Language and Notebook paradigm turns out to be strong and general enough that we’ve been able to design Chat Notebooks so they fit coherently in with the rest of the system. And particularly for those (humans and AIs!) who know the existing system, it may be helpful to discuss some of the precedents and analogies for Chat Notebooks that exist elsewhere in the system.

A key feature of Chat Notebooks is the concept of using a different evaluator for certain notebook content—in their case, an LLM evaluator for chat cells. But it turns out that the idea of having different evaluators is something that’s been around ever since we first invented Notebooks 36 years ago. Back in those days a common setup was a Notebook “front end” that could send evaluations either to a kernel running on your local machine, or to remote kernels running on other (perhaps more powerful) machines. (And, yes, there were shades of what we’d now call “the cloud”, though in those days remote computers often had phone connections, etc.)

Right from the beginning we discussed having evaluators that weren’t directly based on what’s now Wolfram Language (and indeed at a programmatic level we provided plenty of access to external programs, etc.). But it was only when we released Wolfram|Alpha in 2009 that we finally had a compelling reason to think about integrating something other than Wolfram Language evaluation into the core user interface of Notebooks. Because then—through Wolfram|Alpha’s natural language understanding capabilities—we had a way to specify Wolfram Language computations using something other than Wolfram Language: ordinary natural language.

So this led us to introduce Wolfram|Alpha cells—where evaluation first interprets natural language you type, then does the Wolfram Language computation it specifies. You get a Wolfram|Alpha cell by pressing = when you create the cell (we also introduced the inline Ctrl+= mechanism); then Shift+Enter does the evaluation:

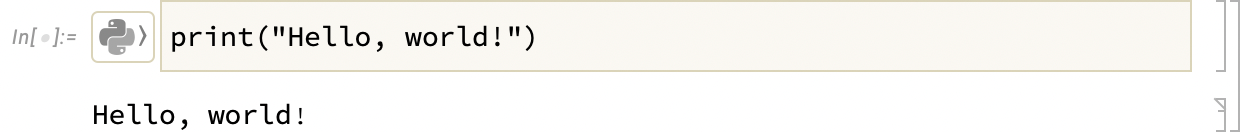

In 2017 (with Version 11.2) the notion of access to “other evaluators” from notebooks took another step—with the introduction of external evaluation cells:

And with this also came the notion of a menu of “possible evaluators”—a precursor to the personas menu of Chat Notebooks:

Then in 2019 came the introduction of Wolfram|Alpha Notebook Edition, with its whole framework around natural language “Wolfram|Alpha-style” input cells:

And in a sense this was the most direct precursor to Chat Notebooks. But now instead of having “free-form input” cells going to Wolfram|Alpha, we have chat cells going to an LLM.

At a programmatic level, ChatEvaluate (and LLMSynthesize) are in many ways not so different from CloudEvaluate, RemoteEvaluate or ParallelEvaluate. But what’s new is the Notebook interface aspect—which is what we’ve invented Chat Notebooks for. In things like ExternalEvaluate and ParallelEvaluate there’s a state maintained within the evaluator. But Wolfram|Alpha, for example, is typically stateless. So in Wolfram|Alpha Notebook Edition “state” is determined from previous cells in the notebook—which is essentially the same mechanism used in Chat Notebooks.

But one of the new things in Chat Notebooks is that not only are previous cells that are somehow identified as “input” used to determine the “state”, but other cells (like text cells) are used as well. And, yes, ever since Version 3 in 1996, there’ve been notebook programming constructs that have been able to process arbitrary notebook content. But Chat Notebooks are the first time “non-input” has been used in “evaluation”.

Most of the defining features of Notebooks—like cells, cell groups, evaluation behavior, and so on—have been there ever since the beginning, back in 1988. But gradually over the years, we’ve progressively polished the concepts of Notebooks—introducing ideas like reverse-closed cells, template boxes, input ligatures, etc. And what’s remarkable to see now is how Chat Notebooks build on all these concepts.

You can cut, copy, paste chat cells just like any other kinds of cells. You can close chat outputs, or reverse close chat inputs. It’s all the same as in the Notebook paradigm we’ve had for so long. But there are new ideas, like alternate outputs, chatblocks, etc. And no doubt over the months and years to come—as we see just how Chat Notebooks are used—we’ll invent ways to extend and polish the Chat Notebook experience. But as of now, it’s exciting to see how we’ve been able to take the paradigm that we invented more than 35 years ago and use it to deliver such a rich and powerful interface to those most modern of things: LLMs.